Discord plans to set every user's account to teen-by-default — unless you're willing to undergo an invasive age verification process.

On Monday, Discord, a popular social app, announced that it will be rolling out "enhanced teen safety features." According to the company, beginning in March:

"[A]ll new and existing users worldwide will have a teen-appropriate experience by default, with updated communication settings, restricted access to age-gated spaces, and content filtering that preserves the privacy and meaningful connections that define Discord."

This means users may be required to complete an age verification process to access sensitive content. According to Discord, that includes "age-restricted channels, servers, or commands and select message requests."

If you choose to opt out of the age verification process, you'll have the following restrictions placed on your account, outlined by Discord:

- Content Filters: Discord users will need to be age-assured as adults in order to unblur sensitive content or turn off the setting.

- Age-gated Spaces: Only users who are age-assured as adults will be able to access age-restricted channels, servers, and app commands.

- Message Request Inbox: Direct messages from people a user may not know are routed to a separate inbox by default, and access to modify this setting is limited to age-assured adult users.

- Friend Request Alerts: People will receive warning prompts for friend requests from users they may not know.

- Stage Restrictions: Only age-assured adults may speak on stage in servers.

The company says age verification will be done via one of two methods: on-device facial age estimation from a video selfie, or submitting a form of ID to one of Discord's "vendor partners."

If the second one is raising any alarm bells, it should. Almost exactly four months ago, in October 2025, a severe data breach may have resulted in 2.1 million Discord users' passport and driver's license photos being stolen by hackers.

Discord, for its part, said only 70,000 users were targeted.

The hack didn't target Discord directly, but rather a third-party customer service vendor, 5CA Systems. 5CA Systems maintains that it was not at fault.

Either way, Discord is assuring users that this privacy-forward user verification process is perfectly safe. We're skeptical.

I would like to point out that I, personally, think that keeping kids safe, especially on Discord, is a great idea. I just think that there's a difference between solving problems and making messes, and I believe Discord may be doing the latter.

But let's take a look at why Discord is going about it this way.

Welcome to the new internet

You might think that this kind of age verification is inconvenient. You might even think this is unwise, given Discord's history of data breaches.

You'd be right on both counts. Unfortunately, this is the direction the internet is headed in.

The story has been building for years, but the gist is this: U.S. lawmakers have long been searching for a way to hold big companies accountable for child safety online. While this is a good idea in theory, like most government-versus-tech solutions, it largely ends up being a bigger hassle for the end user.

The latest attempt was the May 2025 App Store Accountability Act (ASAA). It was designed to give parents more tools to protect their children online.

Of course, the main way lawmakers wanted to do this was by leaning on Google and Apple. The ASAA, which still hasn't passed at the federal level, would require age verification at the app marketplace level.

While it hasn't passed federally, a handful of states have attempted to pass their own versions. Currently, Utah and Louisiana have some version of age verification requirements.

A federal judge placed an injunction on Texas' state law in December.

And we at AppleInsider assume that this ham-fisted attempt will continue to trudge forward in one capacity or another, either federally or state-by-state.

The extant tools

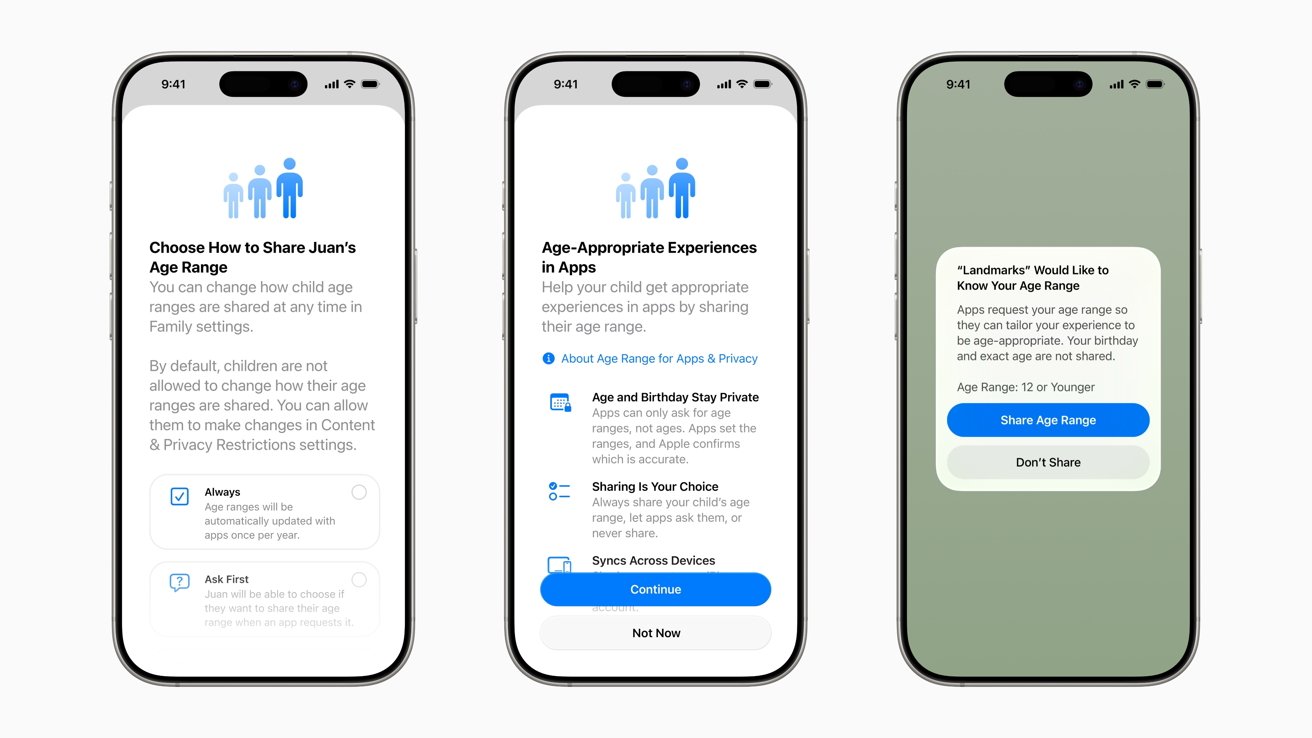

Apple has had tools for this scenario in place for quite some time. Parents can set up devices for underage users by creating a "child account," which can be heavily managed, including remotely, from a parent account.

This includes screen time limits, content and privacy restrictions, and limiting which apps appear in the App Store. It also helps to hide explicit content in podcasts, music videos, and Apple Books.

And if that weren't enough effort on Apple's part, Apple has made setting up child accounts even easier in iOS 26. This includes a simplified setup process, expanded age ratings, and new communication limit settings.

Google has its own version of parent-managed child accounts, too. Both companies have been trying to stay ahead of the curve in keeping kids safe online.

Of course, these current tools are opt-in, and require parents to set them up properly. This, by the way, is the reason the federal government wants more control over the situation.

One final note on Discord

Discord is a cross-platform social communication app. Not only can you access Discord from your iPhone or Android smartphone, you can access it from tablets and desktops, too.

There's even a browser-based version of Discord, allowing users to utilize the app from a list of supported browsers.

As a result, Discord can't rely solely on Apple's or Google's verification tools in every case. This is especially true for younger users who may access Discord exclusively in the browser.