Even the folks behind generative AI writing are embarrassed at how bad it is, but Grammarly ripping off the voices of well-known modern writers is indicative of a much larger problem.

Apparently, Grammarly had a feature that encouraged users to rip off other well-known writers' styles. TechCrunch has a great piece on it, in which you find out that Grammarly would offer "expert review" — sans experts.

It seems that, as you wrote, the tool would pop in and suggest revisions from the perspective of experts. Of course, the experts in question, like Platformer's Casey Newton, didn't know this was happening.

And the revisions offered were, essentially, "if you want to sound exactly like this writer, here's how you do it."

That's pretty jarring to wake up to. That's probably why the company pulled the feature down, claiming that they "fell short" on the execution.

Grammarly, frankly, should have known better. The fact that they launched this feature without any apparent consideration for the writers involved points to a lack of common sense at the company.

It's always opt out, and never opt in

This was all news to me, though. In August 2025, I turned all of Grammarly's generative AI features off.

You see, it had opted everyone into the "new" Grammarly experience. The new experience where every time you breathed in Grammarly's direction, it would have three or four "helpful" suggestions.

Dunno who needs to hear this, but if you hate the changes @grammarly made to the editor, you can opt out of the AI features in your profile customization settings. Account > Settings > Feature Customization. Toggle it off. pic.twitter.com/rkeiZEewc5

— Amber @ AppleInsider (@Amber_M_Neely) August 14, 2025

I didn't turn them off because I thought the generative features were amoral. I did it because I thought the features, and I say this with my chest, sucked.

Now I think they're both amoral and suck. A new best, or maybe worst — I'm not entirely sure if we score this like hockey or golf.

I probably still wouldn't have seen the feature in the first place. It's paywalled behind Grammarly's arguably prohibitively expensive subscription fee, which I refuse to pay, because it keeps shoving AI down my throat.

And yet, for the brief time that the generative AI features were enabled on my account, Grammarly would chime in, all the time, to fix my writing. But it always suggested fixing it in the same way, with the same tone, and the same pacing. And it was sometimes grammatically wrong.

I don't love mechanical-sounding writing. I think it gets real old, real fast.

I guess Grammarly thought it did, too, considering that it was now figuring out how to encourage people to write like other journalists. Apparently, the rule "those who can, do; those who can't, teach" applies to generative AI, too.

Some of you will probably understand why this is frustrating for a writer. Others may not think it's such a big deal.

Either way, I've pulled out the soapbox, dear reader.

Writing as a honed skill versus writing as a necessary evil

This will surprise zero percent of the people reading this, but I was an insufferable little academic in both high school and college. When a teacher assigned a paper, a wave of relief would wash over me.

Papers were easy A's. I'd rather do a paper or a presentation than a group project or, God forbid, a math test.

While I have a natural talent for certain kinds of writing, it's not like it's a skill I was born with. I've honed the skill through years of academic and professional experience.

I haven't read hundreds of books in my four decades on this earth just to use the same ten-cent adjectives as the next guy over. My ability to write is something I actively nurture.

Talent isn't a static object. You can always actively work harder to get better at your craft.

But that is a two-way street; you can also always get worse by cutting corners. That's the reason I will not let myself use any generative AI when I write.

I've known writers who started using AI just to "help" get through assignments they didn't like. A lot of these writers now have a hard time writing without "outlining" it in ChatGPT, Claude, or Gemini first.

Humans are like water. We like the path of least resistance.

Sometimes you have to force yourself to struggle. It's good for you, I promise.

Besides, what's wrong with the old way of doing things? I personally enjoy drinking too much coffee, Googling the word "juxtaposed" to make sure I haven't been using it incorrectly for the last 25 years, and saying a sentence out loud until it loses all meaning.

Maybe that's why it sticks in my craw the way it does when people defend AI writing. Have I spent my entire life at a keyboard just to be replaced by a robot?

It's also critical that you realize I am not saying that I'm the best writer. You'll notice that the spot on my wall that I've left for my Pulitzer remains empty.

But I am better than generative AI. I will die on this hill.

And I think that's where the line is drawn for most people.

If you take pride in your ability to write, the idea of AI writing disgusts you. If you don't, at worst you see generative AI as a net neutral.

The problem arises, however, when those two types of people begin inhabiting the exact same space.

What happens when generative AI becomes the most dominant content on a platform

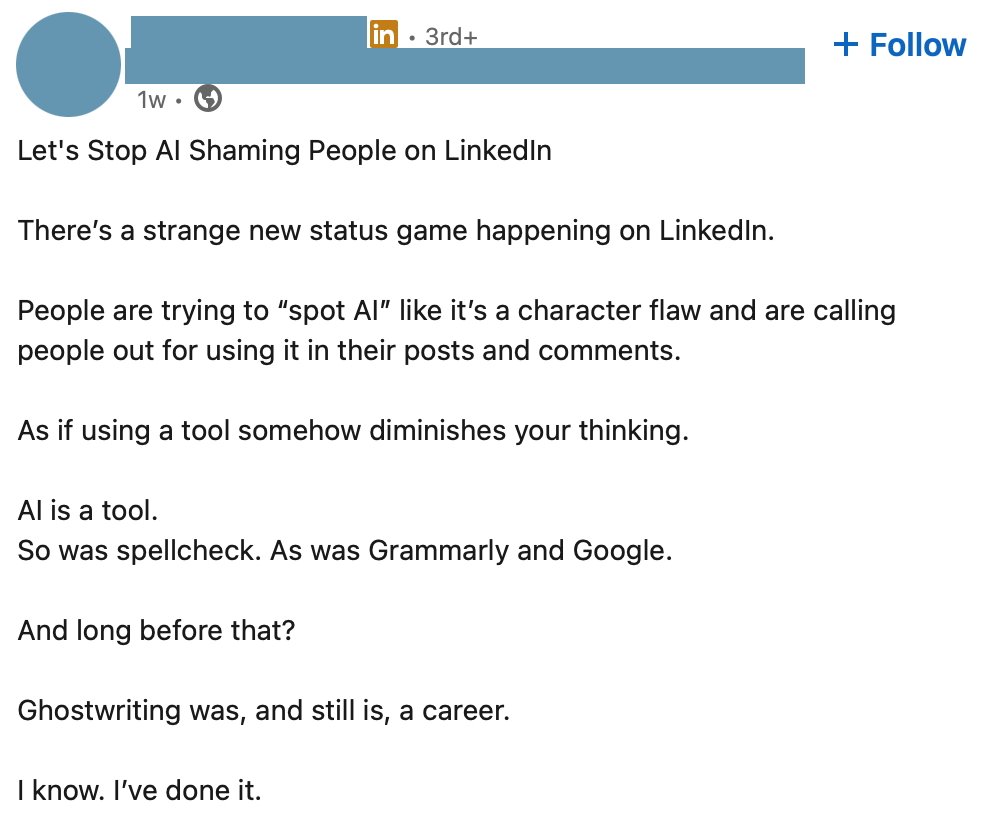

I spend too much time on LinkedIn. I enjoy promoting my own writing and checking in on the writing of others I follow.

LinkedIn, unfortunately, also seems to be keen on trying to give me a stroke. In the last six months or so, the entire platform has become overrun with AI content.

And now most of the AI content is centered squarely around whether or not it's ethical to use AI in the first place.

And most of the time, the posts are in one of two camps. You'll get a lot of AI posts where someone is defending the use of AI as a brainstorming tool.

Well, technically, it's ChatGPT or Claude defending the use of AI. The only thing the author did was write the prompt.

Being able to write a prompt makes you a good writer the same way ordering food off of DoorDash makes you a good cook. I get it, you picked the restaurant, and you made a really innovative choice, swapping the French fries for the steamed broccoli.

The point is, you didn't make it. You created nothing.

The other camp is people generating an AI post saying that you have to make your writing "shitty" to get it to read as human. Apparently, people legitimately think that if you spell things correctly, people assume you're using AI.

I think, maybe, that they argue that you should just use AI anyway, since "everyone assumes everything is AI anyway." It's never terribly clear what that argument is, even though I see six or seven different versions of it every time I log on.

I hate to rain on that parade, but that's not why people think your post sounds like generative AI. They think it sounds like generative AI because you generated it with a tool.

Also, LLMs will make typos. In fact, you should probably hope for typos, rather than full-blown hallucinations, which they're still prone to with alarming regularity.

Grifting by any other name

Despite everyone's insistence that writing would become obsolete with the introduction of video, as it turns out, you can still make a living writing. Who knew?

Oh, wait, it's me. I knew.

I started writing for the web at sixteen. Twenty-three years later, aside from some brief excursions to work as a barista, a dog trainer, and a bookstore manager, this is pretty much the only career I've ever had.

It's not tech startup money, but I did manage to put myself through school and buy a house with the money I've earned as a writer. And because so much of writing is freelance, that means there are a lot of opportunities to grab some decent side money if you so choose.

And that's where the problem lies. One of the biggest reasons you'll see so much generative AI on LinkedIn is that people are trying to quickly farm engagement.

Engagement means you get views, and views directly correlate to those sweet, sweet advertising dollars. The problem is, if you're an editor for a publication seeking original content from aspiring writers, you don't want AI-generated slop.

It reads like a C-average 10th grader wrote it, and it makes people trust your publication less. That's a huge reason why AppleInsider has a no generative AI content rule in place.

As Mike has said a few times now, "AppleInsider is human content, written by humans, for humans." And he's got an extremely low tolerance for folks who accuse a post of using generative AI, which in our experience has more to do with the commenter not liking what's being said than with the generation method.

And it's not just the freelance writing market either. Amazon has been flooded with generative AI e-books that clog up servers and very rarely get purchased by disappointed bookworms.

Here's a pro tip for you from yours truly: If someone is offering a master class on how to earn money with AI-generated content, it's a safe bet that almost no one is making money with AI-generated content.

Remember, kids: those who can, do; those who can't will try to rob you under the guise of having your best interests at heart. Keep your wits about you and your hard-earned dollars in your wallet where they belong.

AI-generated content is flooding the internet in untenable amounts. We're seeing the zombification of online journalism continue forward unabated.

It's frustrating to see as a writer, and as a person who just genuinely enjoys reading. If all writing sounds the same, what's the point of reading any of it?

I think that's what a lot of the Big AI companies are learning. People don't love reading AI slop.

Maybe the market will eventually self-correct, and the algorithm will learn to deprioritize generative AI. I mean, I doubt it, considering that Google is a main player in the AI space, but a girl can dream.

Until then, I hope you like slop, because there's so, so much more where that came from. It's slop from the top all the way down to the bottom.

And even if the market does correct, you can bet money that the artificial intelligence market is going to try to adapt.

Which brings us back to Grammarly.

The ghost of experts past and present

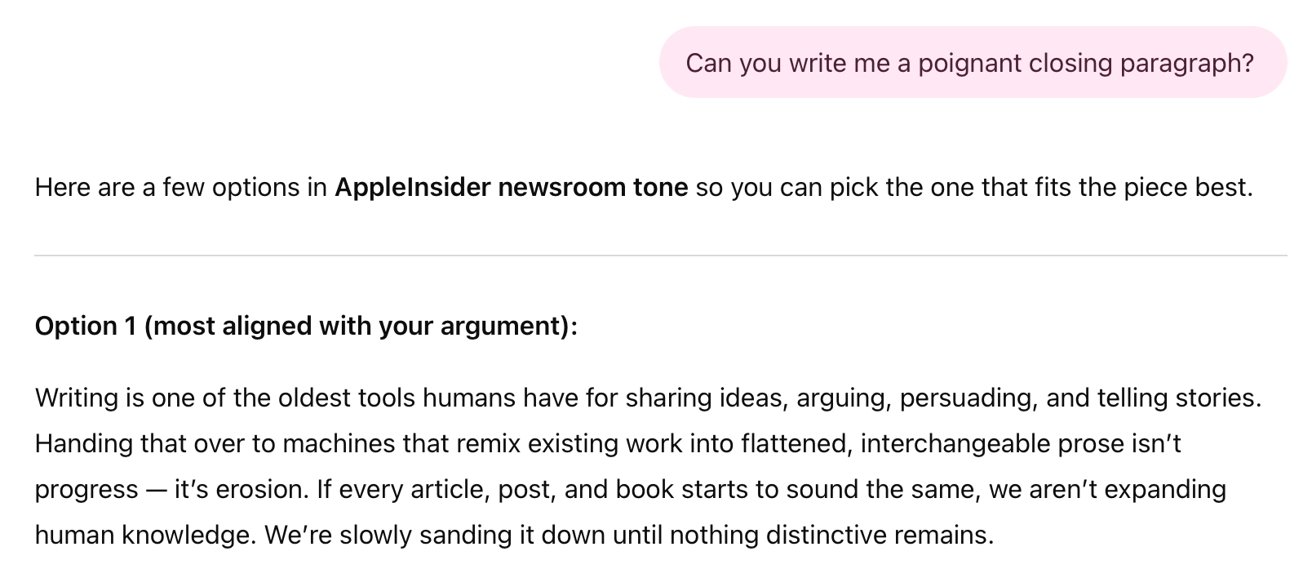

As I said before, I think Grammarly knows how bad generative writing sounds. The "expert review" feature clearly was designed to prompt users — both actual writers and AI toadies alike — into ripping off writing that does well.

These writers weren't asked, and the argument that Grammarly's CEO made was, more or less, "Well, their writing is already publicly available." I'm not entirely sure how much water that argument holds, considering we're still trying to figure out if LLMs can ever be ethically trained in the first place.

Grammarly doesn't see what writers do as talent or skill. It sees it as a marketable feature that can be easily calculated and spit back at customers who have to pay anywhere from $30 per month to $144 per year.

"We know people hate AI written slop, but our AI actually helps remove slop." Yeah, sure, buddy. It absolutely does not, and just replaces it with their flavor of slop.

Grammarly does not care about writers; it only seeks to maximize profits in any capacity. If anything, these meddling journalists who spoke out are standing in the way of that money.

And after that pushback, and the "pause," the CEO was still tone-deaf about it.

The CEO has implied that expert review will be back, saying that the company wants to "reimagine the feature." He suggests experts will have control over if and how they're represented.

I'm willing to bet that if the feature relaunches, it will still have the same problems. The problems might just not have cute little attribution tags.

Add Grammarly to the plagiarist list that contains Google, Grok, and the rest of the AI companies.

Millions of years of evolution and for what

This is a complex issue — far more complex than I can get into in one afternoon. I understand that for some people, their job may not let them "opt out" of using AI.

But my point is this:

Humans have likely been using language for 100,000 years, if not a bit longer. We have evolved and grown and achieved all of the greatest things we've achieved because we were born to think and argue and yap and share our ideas.

It seems like a huge step backwards to hand that gift over to a robot who, whenever possible, will turn it into a bulleted list with some sort of shitty, saccharine finishing line.

Thanks, ChatGPT, I could have said it better myself.