Gemini Intelligence has been announced just a month before Apple Intelligence's big moment at WWDC, but I have doubts about whether either company can deliver on its AI promises.

Google announced its new suite of AI features under the Gemini Intelligence banner during a streamed event on May 12, 2026. That's less than a month before Apple's WWDC 2026 event kicks off on June 8.

Surely, that isn't a coincidence.

Gemini Intelligence promises a whole lot, some of it similar to what Apple promised iPhone owners almost two years ago. Apple has yet to produce the personalized Apple Intelligence features that were revealed during WWDC 2024.

That brings us back to Gemini Intelligence and Google's claims that it will start to roll out this summer. The similarities to Apple Intelligence are clear, but with Google's AI head start, it's high time it started to execute on its promises.

For iPhone users peering over the fence, Gemini Intelligence appears compelling. There's a lot to like, but it's impossible not to look at it and wonder where Apple Intelligence is right now and why Google chose this moment to announce Gemini Intelligence.

Gemini Intelligence gets personal

Gemini Intelligence was announced during The Android Show, so it's no surprise that Google used its Pixel phones to show it off. The Pixels are Google's answer to the iPhone, giving the company more direct control over everything.

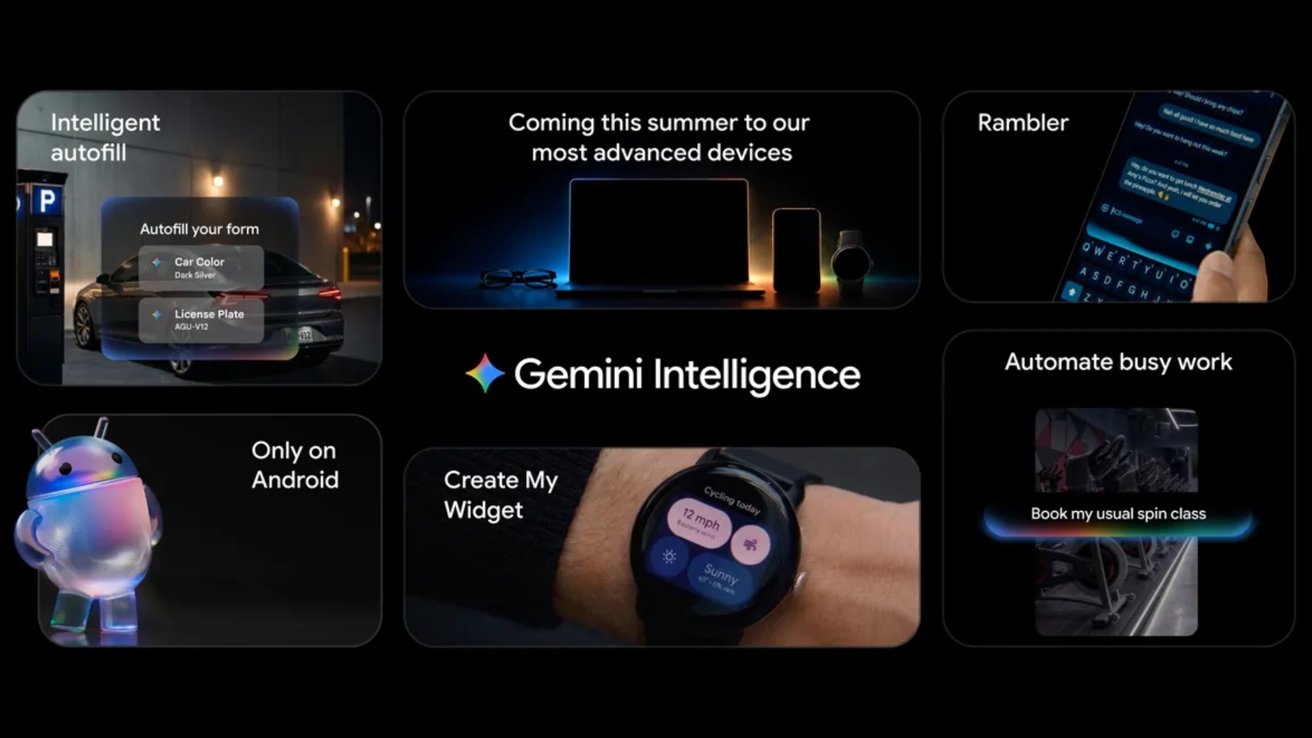

At its core, Gemini Intelligence is actually five distinct features. All of them use AI to try to change the way people use their phones, ranging from the mundane to the futuristic.

Starting at the mundane end of things, Gemini Intelligence promises to make it easier to search for things on the web. Chrome will use Gemini to summarize and compare content from different sources, while an auto-browse feature will book appointments and carry out other, similar tasks.

Android will also take the hassle out of filling out forms. Using Gemini's Personal Intelligence, Android will be able to fill in details across text boxes so users don't have to type them in themselves. Think of it as AI-powered autofill.

The next feature, Rambler, will allow users to talk to their Android phone and have it turn what they said into clear, concise text. Google is already pretty good at voice recognition and transcription, so it's no surprise that Gemini Intelligence builds on that.

In demos, Google used a text message scenario to show what the new feature can do. Rambler is built into the Gboard keyboard, so users press a button and simply talk as if they were having a conversation.

Next, Gboard removes all of the "ums," "ahs," and "likes" as well as any repetition or tangents. The result is something that is more akin to a message you'd type, but without the typing.

One Gemini Intelligence feature that Apple should absolutely borrow is the ability to create custom widgets. Dubbed "Create My Widget," the feature can build an entire widget based on what the user wants it to display.

Examples include building a widget that only shows the wind speed and whether it will rain, rather than a full forecast. Another imagines someone creating a new widget that displays a new list of meal options each week.

Finally, we get to the futuristic feature, the one that Google led with when unveiling Gemini Intelligence. It's the kind of feature that has the potential to be useful, albeit in limited situations.

That feature is multi-app automation, with Gemini handling tedious tasks so the user doesn't have to. Gemini will be able to book a spin class or arrange a trip via Expedia, for example.

Another example Google gave was a grocery list in a notes app. With the list open, an Android user will be able to long-press the power button and ask Gemini to get to work. It'll go off and build a shopping cart with all of the listed items, Google says.

Gemini Intelligence promises a whole lot. But as with Apple Intelligence, questions remain about whether anyone will actually use these features in the real world.

Limited availability

Google doesn't have the luxury of designing features for a single device. Whereas Apple can focus on the iPhone, Google has to consider dozens of different models in all kinds of form factors.

That's why, at launch, Gemini Intelligence will be limited to the latest Pixel and Samsung Galaxy devices. If you're using a phone from Motorola, for example, all bets are off.

As for timescales, Google says that Gemini Intelligence's various features will start to roll out to devices this summer. After that, it'll start to pop up on watches, cars, laptops, and even glasses. But details are still sparse on which ones.

Chasing headlines or changing the game?

As I mentioned earlier, Google chose the month before WWDC to announce Gemini Intelligence. I suspect it was keen to get its AI features in front of people before Apple shows off its own AI upgrades.

Of course, those who pay attention to tech outside of Apple know that Google's tech conferences always take place before WWDC. Whether the timing was an intentional play to get some easy headlines ahead of Apple's annual developer conference remains to be seen.

All of the newly announced features make for great demonstrations, but Google's own history raises real questions about whether they'll still be around in a few years.

It's easy to wonder whether Google's new features were built to capitalize on AI buzz or to actually be used. And we only have to look at the company's track record to see why.

Over the years, Google has announced various attempts at personal and proactive AI features. But not all of them are still around today.

If we go all the way back to 2012, we find Google Now. It was a feature of the Google app and was supposed to proactively deliver information before it was needed. There was even iPhone support.

Google Now would use contextual cards that displayed information when it would be most useful. Think flight information and commute times, for example.

Four years later, Google Now was dead, replaced by Google Assistant. Users had found Google Now too intrusive, and it often offered up irrelevant information.

2018 saw the flashy unveiling of Google Duplex, an AI that would make phone calls for people. Users would tell Duplex what they needed, and it would make the call, using an AI voice to complete the task.

But the feature was littered with problems. It couldn't reliably deal with the way conversations could change course. Then came the legal and privacy concerns.

People taking AI calls would need to be warned, and there were questions over whether they would simply hang up. Ultimately, Google Duplex on the web was killed off in 2022, and Duplex tech has been rolled into other Gemini features.

There are other examples, too. But it's worth calling out two features that stuck around. And Apple even copied them for Apple Intelligence.

Hold for Me and Call Screen are both features that are available on Pixel phones today. The former waits in a hold queue for you, while the latter screens calls so you can avoid the ones you don't need to answer.

Some of Google's advanced features stand the test of time, while others don't. We'll have to wait and see how Gemini Intelligence fares.

Apple Intelligence is up next

Apple will unveil its new iPhone software during the WWDC 2026 opening stream on June 8. And with it, we expect Apple to share what's next for Apple Intelligence.

Much of what that will entail is likely to be features we've already seen but that Apple has yet to deliver. A new, more personal Siri is expected.

It was first promised for iOS 18 back in 2024 and has yet to launch. That delay even spurred some lawsuits and a class action settlement.

If the new Siri can do everything previously announced, it will have automation abilities similar to those of Gemini Intelligence. Siri will be able to work with and between apps while understanding context and what's on-screen.

Siri is also tipped to have a new, chatbot-like interface. Apple Intelligence will also likely extend to new photo-editing features, while Safari will use it to automatically group tabs.

One thing that Apple and Google have in common

Apple's and Google's roadmaps both face the same credibility test. These Apple Intelligence changes have a familiar feeling, one that also hangs over Google's Gemini Intelligence announcements.

It's a feeling that's hard to shake: Apple Intelligence is set to gain new features that few will ever use.

Even if Apple Intelligence and Gemini Intelligence can do everything they claim and more, they will still have the same fundamental problem. Both will offer features that demo well and will wow users the first time they use them.

Then, six months later, many users won't even remember they existed.