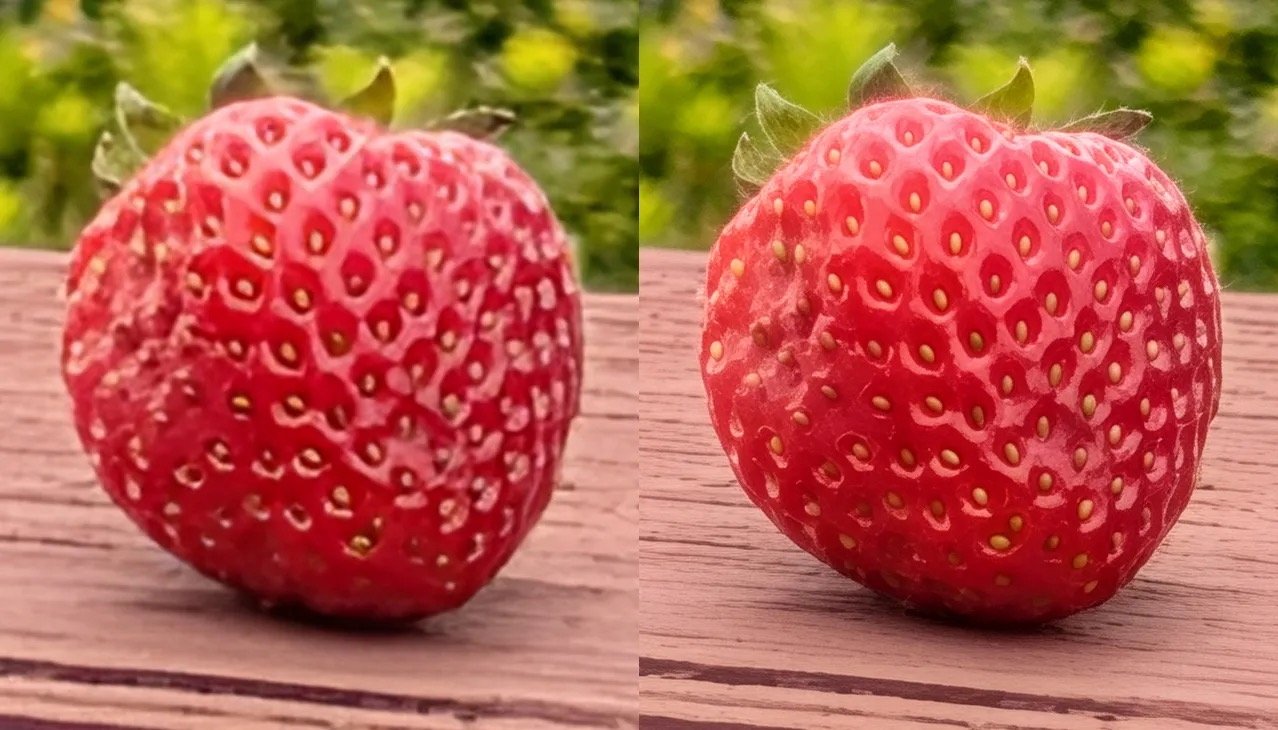

Stop us if you've heard this about flagship Android devices before — Google's new Pro-Res Zoom on the Pixel 10 Pro fabricates detail with AI instead of capturing it with the lens.

The Pixel 10 Pro, launched in August 2025, has a 5x optical zoom lens. Past that range, it leans on cropping and, at the longest zoom levels, artificial intelligence. Google's "Pro-Res Zoom" applies a diffusion model to generate detail the sensor never recorded.

Google brags about 10x and even 100x zoom, but beyond 30x the phone isn't capturing reality anymore. It's spitting out AI guesswork.

Around the web, people have noticed something odd going on with Google's latest smartphone. Writer John Scalzi showed the effect in a post titled "Pictures, Not Photos."

It brings to mind the clash in philosophy between Android and iOS cameras. Google is comfortable making up detail with AI when the hardware falls short, while Apple shies away from faking pixels that weren't really there.

How Google's zoom works

When the subject is simple, like fruit or bricks on a wall, the AI fills in believable patterns. But when the subject is complex, like letters on a sign, the illusion collapses.

Up to 5x, the Pixel 10 Pro relies on optical hardware. Beyond that, Scalzi notes, it crops into the sensor, then after 30x it uses AI to upscale. The process is closer to an art program than a magnifying glass.
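The three stages can be sketched in a few lines of Python. This is only an illustration of the thresholds described above, not Google's actual pipeline: the function names are hypothetical, the 5x and 30x cutoffs come from Scalzi's account, and the pixel math assumes a plain center crop of the 5x frame.

```python
# Thresholds as reported in the article, not taken from Google documentation.
OPTICAL_MAX = 5.0    # longest optical zoom on the telephoto lens
AI_THRESHOLD = 30.0  # zoom level past which AI upscaling reportedly takes over


def zoom_stage(zoom: float) -> str:
    """Name the stage the article describes for a given zoom level."""
    if zoom <= OPTICAL_MAX:
        return "optical"
    if zoom <= AI_THRESHOLD:
        return "crop"
    return "ai_upscale"


def captured_fraction(zoom: float) -> float:
    """Share of the 5x frame's pixels left after a simple center crop.

    Assumption: cropping is a plain center crop with no multi-frame
    processing, which is a simplification for illustration only.
    """
    if zoom <= OPTICAL_MAX:
        return 1.0
    linear_crop = zoom / OPTICAL_MAX
    return 1.0 / (linear_crop * linear_crop)


if __name__ == "__main__":
    for zoom in (5, 10, 30, 100):
        share = captured_fraction(zoom)
        print(f"{zoom}x: {zoom_stage(zoom)}, {share:.2%} of the 5x frame")
```

Under those assumptions, a 100x shot covers the same patch of scene as one four-hundredth of the 5x frame. Everything else in the final image has to come from somewhere other than the lens.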

The system has roots in earlier Pixel features like Night Sight and Super Res Zoom, which stretched the limits of small sensors. Pro-Res Zoom pushes further, moving from enhancement into invention.

Apple's approach to photography

Apple has leaned on computational photography for nearly a decade. The iPhone 7 Plus introduced Portrait Mode in 2016, using depth data and algorithms to blur backgrounds.

Every iPhone since has used machine learning to combine exposures, balance tones, and smooth noise. The iPhone 16 Pro continues that pattern.

The key difference is that Apple's algorithms only work with data the camera actually captures. The system may adjust a sky's color or reduce grain in low light, but it doesn't create new pixels from data that wasn't in the shot to begin with.

The company's guiding principle has been "realism and accuracy." Even ProRAW, Apple's most advanced format, is still tied to what the sensor saw.

The moon photo controversy

In Samsung's "moon photo" scandal in 2023, the Scene Optimizer feature recognized the moon and added detail to sharpen it. The image was a composite, not a photograph.

Google's Pro-Res Zoom faces a similar issue with everyday subjects. Unlike the moon, a street sign or power line has a unique shape that no database can predict, so the generated detail ends up faked.

Apple avoids this issue by using depth information from the scene in Portrait Mode. While it involves manipulation, it's not fabrication.

For everyday users, the difference between a real photo and an AI-generated picture comes down to trust. With the iPhone 17, Apple will keep pushing features that make photos look better without inventing details that weren't there in the first place.

That means your shots will still reflect what you actually saw, even as algorithms clean up noise and balance exposure. It may not give you a magical 100x zoom, but it does mean you can trust your iPhone photos to be accurate representations of reality.