New papers on Apple's machine learning blog detail how AI can be used for faster, cheaper, and more effective QE testing, as well as for bug fixing and identification.

In October 2025, Apple published three new studies related to artificial intelligence and the possible applications of LLMs. The company's research work goes back many years, but some of its latest papers have concentrated on the flaws of AI, and how to prevent unwanted AI actions and hallucinations.

Now, one of its new studies explains how autonomous AI agents can be used for Quality Engineering (QE) tests, among other things. Its other two research papers focus on how AI agents can be used to fix and predict bugs in code after appropriate training.

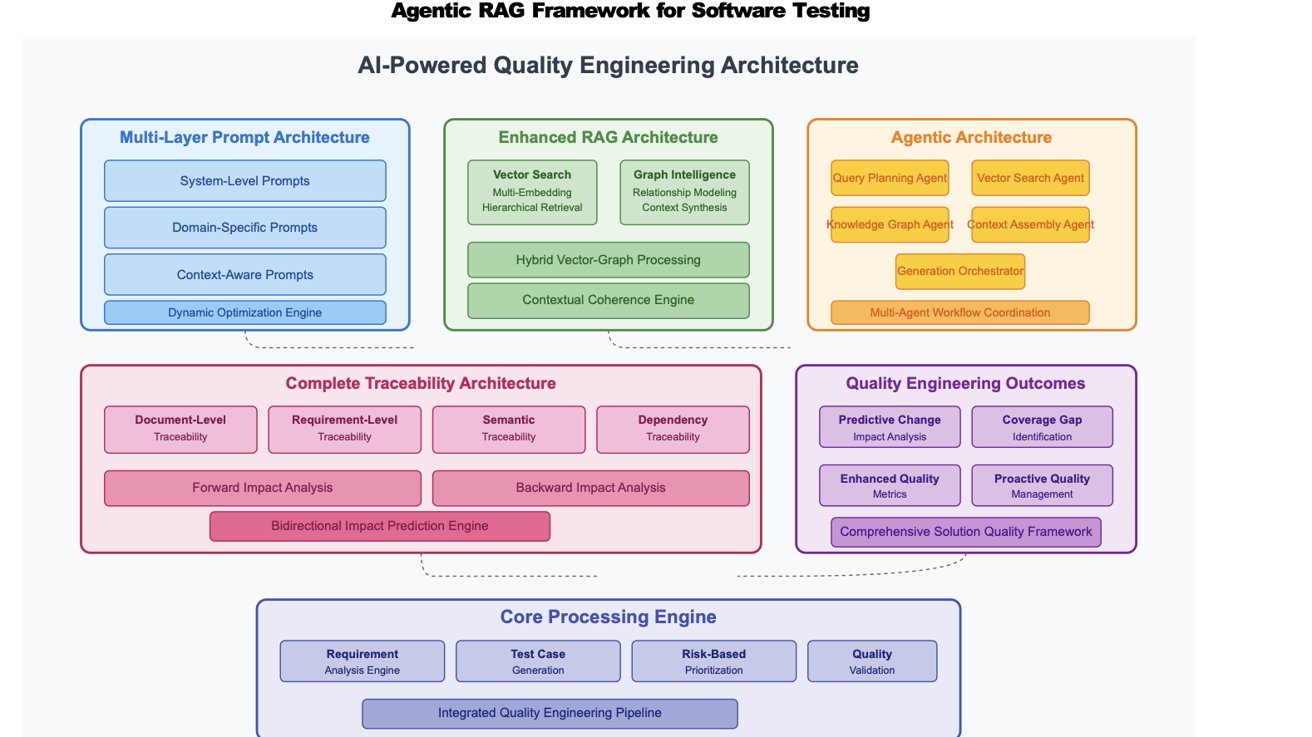

Agentic RAG for Software Testing with Hybrid Vector-Graph and Multi-Agent Orchestration

In its first study, Apple's researchers point out the limitations and drawbacks of traditional QE test creation. Specifically, they explain that Quality Engineers spend 30-40% of their time making test plans, cases, and automation scripts manually.

The study proposes a solution: let AI agents do the necessary work instead. "Recent advances in machine learning for software testing have shown promise in automated test case generation," the researchers write.

However, this doesn't mean the best approach is to let a standard AI model plan, create, and validate Quality Engineering tests. "General-purpose AI systems lack the domain-specific knowledge required for effective software testing," the study reads.

Apple's researchers also point out that "existing systems fail to maintain comprehensive traceability through the testing lifecycle." The study offers a solution to this problem in the form of a complex, four-step Agentic RAG Framework, and a total of six AI agents for every aspect of the test process.

One agent, for instance, ensures regulatory compliance, another examines historical tests, while a third agent creates tests based on current methodology. There's also a conflict resolution agent as well as one that interfaces between modules and systems that are part of the test process.

In essence, multiple AI agents are used to create and manage QE tests, instead of an engineer doing everything manually.
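To make the idea of several specialized agents handing work to one another more concrete, here is a minimal Python sketch. It is not Apple's framework: the `DraftCase` class, the three agent functions, and `run_pipeline` are all hypothetical names invented for illustration, and each "agent" is a plain function rather than an LLM call. The only thing it tries to show is how a draft test can pass through agents in sequence while recording which agent touched it, which is one simple way to keep a traceable record.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class DraftCase:
    """A draft test case plus a record of which agents touched it (hypothetical)."""
    requirement: str
    steps: List[str] = field(default_factory=list)
    trace: List[str] = field(default_factory=list)


# In this sketch an "agent" is just a function that enriches the draft.
Agent = Callable[[DraftCase], DraftCase]


def historical_agent(draft: DraftCase) -> DraftCase:
    # Stand-in for an agent that looks at earlier tests.
    draft.steps.append(f"reuse checks from earlier tests covering: {draft.requirement}")
    draft.trace.append("historical")
    return draft


def generation_agent(draft: DraftCase) -> DraftCase:
    # Stand-in for an agent that writes a new test step.
    draft.steps.append(f"verify: {draft.requirement}")
    draft.trace.append("generation")
    return draft


def compliance_agent(draft: DraftCase) -> DraftCase:
    # Stand-in for an agent that adds a regulatory check.
    draft.steps.append("confirm audit logging is enabled")
    draft.trace.append("compliance")
    return draft


def run_pipeline(requirement: str, agents: List[Agent]) -> DraftCase:
    """Pass one draft through every agent in order."""
    draft = DraftCase(requirement)
    for agent in agents:
        draft = agent(draft)
    return draft


result = run_pipeline(
    "user can reset password",
    [historical_agent, generation_agent, compliance_agent],
)
print(result.trace)
print(result.steps)
```

A real system would replace each function body with a model call and retrieval step; the sequential hand-off and the `trace` list are the parts this sketch is meant to illustrate.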

The approach of Apple's researchers "demonstrates significant improvements in accuracy (94.8% vs. 65% baseline), productivity (85% time reduction), and quality metrics (35% improvement in defect detection)." It also ensures document traceability throughout the QE lifecycle.

Training Software Engineering Agents and Verifiers with SWE-Gym

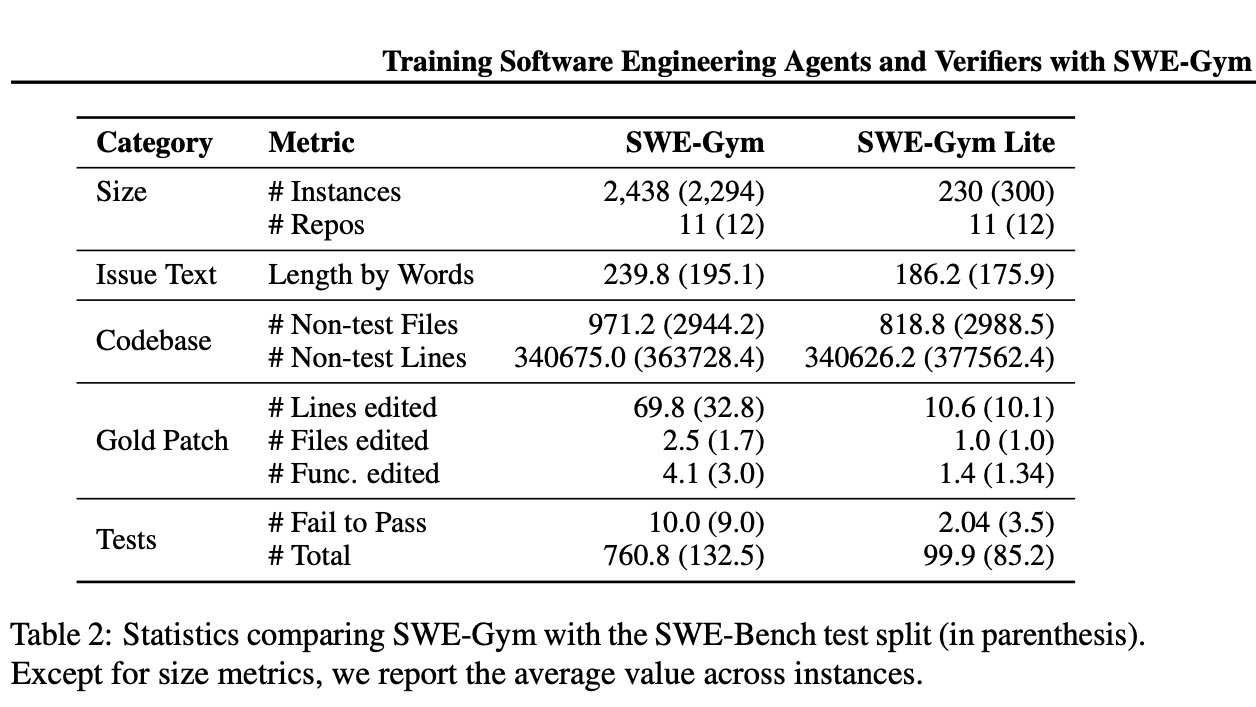

Another scientific study by Apple researchers, published in October 2025, explores the use of AI agents in resolving bugs in code. SWE-Gym is described as "the first environment for training real-world software engineering (SWE) agents."

Apple's researchers say that SWE-Gym is an environment for training real-world software engineering (SWE) agents. Image Credit: Apple.

It combines "real-world software engineering tasks from GitHub issues with pre-installed dependencies and executable test verification." Specifically, "SWE-Gym comprises 2,438 real-world software engineering tasks sourced from pull requests in 11 popular Python repositories."

The language model-based SWE agents are required to find ways of solving real-world GitHub issues, using the provided code bases and executable environments.
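The phrase "executable test verification" can be illustrated with a small Python sketch. This is not SWE-Gym's code or data format: the `Task` class, its `fail_to_pass` and `pass_to_pass` fields, and the toy `mean` functions are assumptions made up for this example. The sketch treats a task as resolved only if the tests that exposed the bug now pass and the tests that already passed still do.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence


@dataclass
class Task:
    """A toy task: an issue plus the tests used to judge a fix (hypothetical format)."""
    issue: str
    fail_to_pass: List[str]  # tests that fail before the fix and should pass after it
    pass_to_pass: List[str]  # tests that pass before the fix and must keep passing


def buggy_mean(xs: Sequence[float]) -> float:
    return sum(xs) / 2  # bug: the length is hard-coded


def fixed_mean(xs: Sequence[float]) -> float:
    return sum(xs) / len(xs)


# Each test receives the implementation under test and returns True on success.
TESTS: Dict[str, Callable[[Callable], bool]] = {
    "test_mean_of_pair": lambda mean: mean([2, 4]) == 3,
    "test_mean_of_triple": lambda mean: mean([3, 6, 9]) == 6,
}


def run_test(name: str, implementation: Callable) -> bool:
    """Run one named test; any exception counts as a failure."""
    try:
        return bool(TESTS[name](implementation))
    except Exception:
        return False


def is_resolved(task: Task, implementation: Callable) -> bool:
    """A candidate fix resolves the task only if every listed test passes."""
    names = task.fail_to_pass + task.pass_to_pass
    return all(run_test(name, implementation) for name in names)


task = Task(
    issue="mean() returns the wrong value for lists of three items",
    fail_to_pass=["test_mean_of_triple"],
    pass_to_pass=["test_mean_of_pair"],
)

print(is_resolved(task, buggy_mean))  # the original code
print(is_resolved(task, fixed_mean))  # a candidate fix
```

Because the verdict comes from running tests rather than from comparing text, any patch that makes the tests pass counts as a solution, which is what makes this kind of environment usable as a training signal.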

When an LM interacts with SWE-Gym, it learns to improve itself. Even so, Apple's engineers noted that "self-improvement results are modest."

Apple's engineers also created a smaller subset of 230 tasks. Dubbed SWE-Gym Lite, it contains easier, self-contained tasks compared to those in the standard SWE-Gym.

Language models trained with SWE-Gym managed to solve 72.5% of tasks correctly, while SWE-Gym Lite appears more useful for prototyping, as it yields results in shorter timeframes. Ultimately, Apple's researchers noted that there were "strong empirical results" demonstrating the effectiveness of SWE-Gym.

In terms of practical benefits, the study explains that SWE-Gym could lead to increased developer productivity across various industries. The researchers also outline several directions for further research on SWE-Gym, including how to keep humans in the loop.

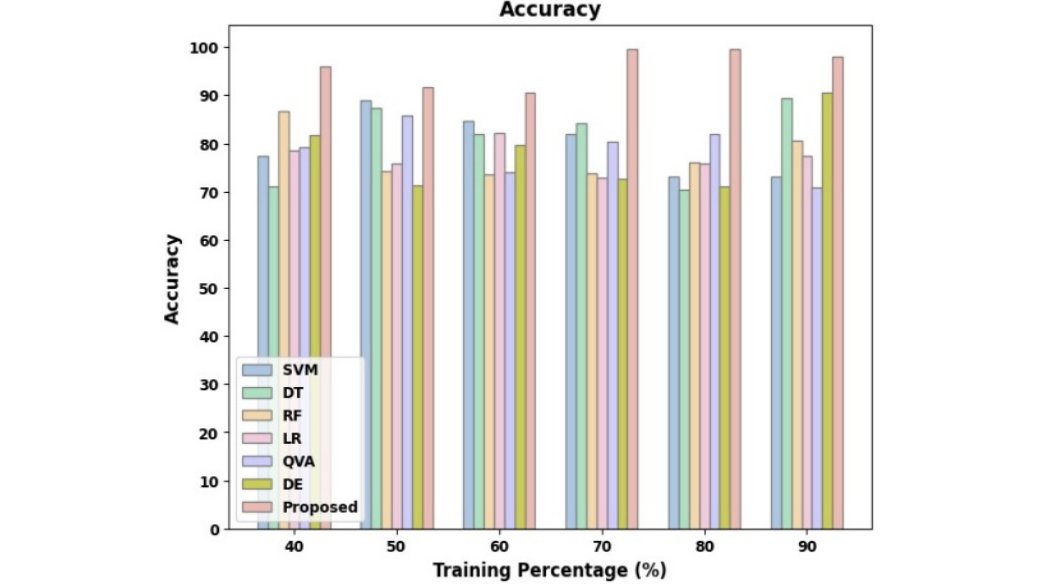

Software Defect Prediction using Autoencoder Transformer Model

A third Apple study highlights the same problem of manual testing conducted by quality engineers. The research paper, titled "Software Defect Prediction using Autoencoder Transformer Model," notes that having an engineer conduct tests manually is slow and not cost-effective.

Human error is, understandably, another factor outlined by Apple's researchers. Traditional AI-based defect prevention methods, meanwhile, only resolve issues once development ends, ignoring problems that could have been resolved much earlier.

Apple's researchers created a solution to this problem in the form of a new model. The study "introduces an AI-powered quality engineering approach that enhances software defect prediction using the ADE-QVAET model."

The two acronyms in the model name are "Adaptive Differential Evolution (ADE)" and "Quantum Variational Autoencoder-Transformer (QVAET)." Combined, they become ADE-QVAET.

The ADE-QVAET approach also includes "Adaptive Noise Reduction and Augmentation (ANRA)." ANRA improves results by balancing defect instances and reducing noise.

ADE is described as an optimization technique, "which adapts the hyperparameters of machine learning models during training to enhance their performance."

QVAET, meanwhile, "detects accurate defects by extracting high-dimensional latent features while preserving sequential dependency."

By combining these two components and using ANRA to reduce noise, AI can learn to recognize software bugs and defects through training and pattern recognition.
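For readers unfamiliar with differential evolution, the optimization idea behind ADE can be sketched in a few lines of Python. This is not the paper's ADE algorithm: it is a generic self-adaptive variant in which each candidate carries its own mutation factor and crossover rate, and `validation_loss` is a made-up stand-in for training a model and scoring it. The two "hyperparameters" (a log learning rate and a dropout rate), their bounds, and the function names are all assumptions for illustration.

```python
import random


def validation_loss(params):
    """Toy stand-in for 'train a model, then measure validation loss'."""
    log_lr, dropout = params
    return (log_lr + 3.0) ** 2 + (dropout - 0.2) ** 2


# Hypothetical search ranges: log10 learning rate and dropout rate.
BOUNDS = [(-6.0, 0.0), (0.0, 0.9)]


def clip(value, low, high):
    return max(low, min(high, value))


def adaptive_de(loss, bounds, pop_size=12, generations=60, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    scores = [loss(ind) for ind in pop]
    # Each individual carries its own mutation factor F and crossover rate CR.
    control = [(0.5, 0.9) for _ in range(pop_size)]

    for _ in range(generations):
        for i in range(pop_size):
            f, cr = control[i]
            # Self-adaptation: occasionally resample the control parameters.
            if rng.random() < 0.1:
                f = rng.uniform(0.1, 1.0)
            if rng.random() < 0.1:
                cr = rng.random()

            # Mutation uses three distinct other individuals.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            forced = rng.randrange(dim)  # at least one dimension is always mutated
            trial = []
            for d in range(dim):
                if d == forced or rng.random() < cr:
                    value = pop[a][d] + f * (pop[b][d] - pop[c][d])
                    trial.append(clip(value, *bounds[d]))
                else:
                    trial.append(pop[i][d])

            # Greedy selection: keep the trial, and the F/CR that produced it,
            # only if it is at least as good as the current individual.
            trial_score = loss(trial)
            if trial_score <= scores[i]:
                pop[i], scores[i], control[i] = trial, trial_score, (f, cr)

    best = min(range(pop_size), key=scores.__getitem__)
    return pop[best], scores[best]


best_params, best_loss = adaptive_de(validation_loss, BOUNDS)
print(best_params, best_loss)
```

The point of the sketch is the feedback loop: control settings that produce better candidates survive alongside those candidates, so the search tunes itself while it tunes the hyperparameters.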

"This research addresses existing model limitations by providing precise defect monitoring and improving software quality," the study reads. "Future AI-driven testing tools can be enhanced using deep learning and reinforcement learning to predict and prevent software issues even before development."

It remains to be seen if Apple will apply any of the knowledge gained from these studies to its existing products. Xcode 26 has recently gained support for third-party AI accounts, so the inclusion of Apple-designed code correction models wouldn't be a far-fetched idea.