Extremely poorly constructed AI apps available in the App Store are leaking the data of millions of users, a repository from a security research team has revealed.

The rise of artificial intelligence and connected services has led to a surge of AI-themed apps in the App Store. However, that AI gold rush can produce some extremely poorly made apps, putting user data at risk in pursuit of a quick buck.

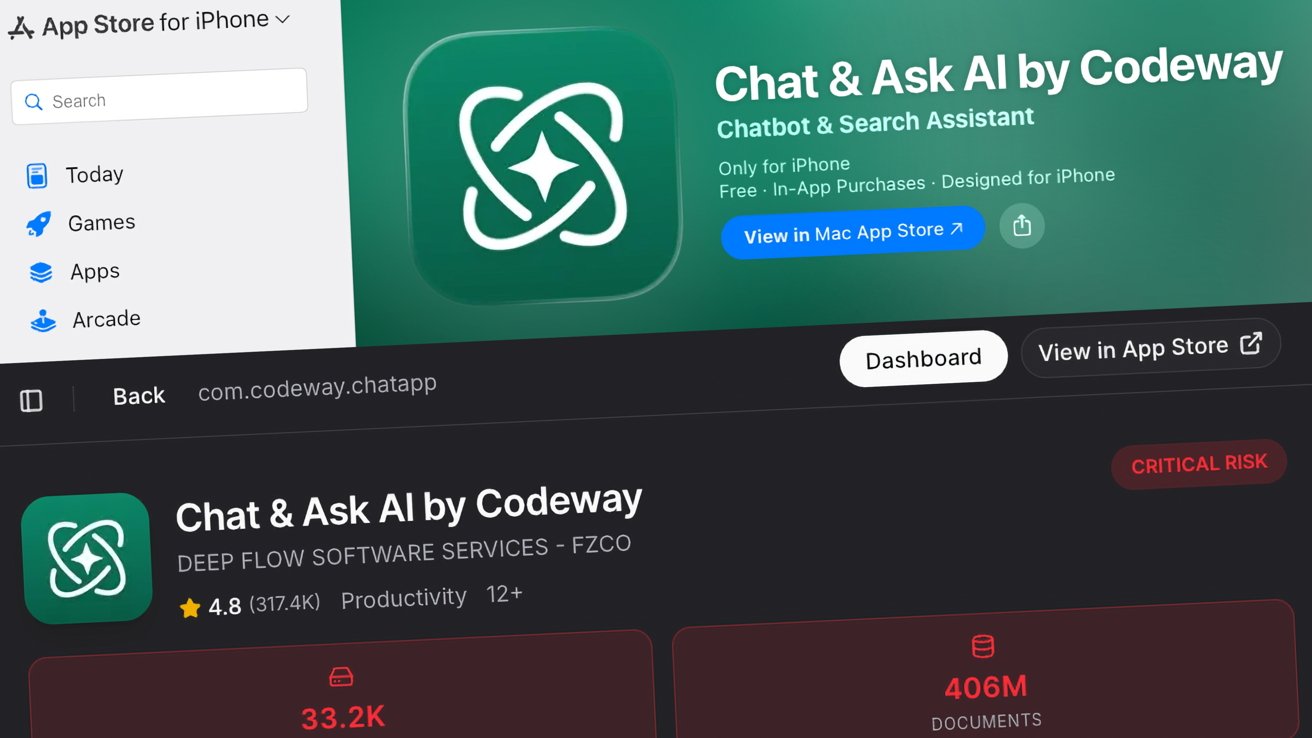

A new repository from security outfit CovertLabs is working to expose apps that have failed to secure user data properly. Known as Firehound, it is a public repository that catalogs the databases and files leaked out by apps, serving as a warning to developers to be more careful with user data.

The catalog isn't exhaustive, as it lists only 198 iOS apps in total at this point, but its entries include many major examples where security wasn't really considered.

Many of the top-ranking apps on the list are AI-based services. Not every entry appears to be AI-specific, but quite a few of those at the top of the roster are.

This includes the worst-offending app, "Chat & Ask AI by Codeway," which has over 406 million exposed files and records. In a tweet, a security researcher behind the site, known as "Harris0n," said that the exposed data includes the entire chat history of over 18 million users, totalling 380 million messages.

Considering the breadth and types of sensitive queries that people ask chatbots, that is a lot of information that users certainly wouldn't want exposed to the Internet. Harris0n declared, "The developers need to be held accountable for this level of negligence."

Responsible releases

The site does provide detailed analysis of data exposed by the apps, including details of data structures pertaining to chat messages and user details. While the structures are openly displayed, the files are not openly exposed to everyone.

Instead, the registry is limited in how much data is exposed, and it requires users to register to see it in the first place. Access requests are also manually reviewed, with members of law enforcement and security professionals given priority.

First-time visitors to the repository are shown a notice about "Responsible Disclosure," asking the developers of apps listed in the registry to get in contact with the team running it. It says the listing for the app will be removed, and guidance will be provided to help fix the situation.

An AI trust issue

The repository's listings are a reminder that not every app is created equal. A promising app can have an underlying failure to secure data, and can become a hazard for its users.

This is especially true at a time when users are more openly trusting the information provided by AI services.

In December, that trust was exploited in a new form of Atomic macOS Stealer (AMOS) attack, which used search result ads for queries about clearing disk space in macOS. Victims were presented with links to shared chatbot chats on ChatGPT and Grok, which guided them through steps that ultimately infected their systems and leaked their data.

While the leaks cataloged in the new repository aren't a failure of AI itself, they do demonstrate that users should be more aware of instances where their data is at risk from poorly coded apps with lax security policies, if any. Just because it says AI in the title doesn't mean the app should be trusted at face value.

End users should remain vigilant about the apps they install and the kind of data those apps deal with. While people should be wary about what they tell even major AI chatbots in the first place, they should be equally wary, or more so, about lesser-known services that are more likely to be a security risk.