Apple is still working on ways to help Siri see and understand apps on screen, and a new paper explains how it is developing a version of Ferret that can run locally on an iPhone.

Apple's work to bring Siri up to speed with other AI systems available on smartphones is gradually accelerating. While its immediate attempts to deliver a new, more contextual Siri aren't quite ready for primetime, Apple is still looking ahead to other updates it can make to its assistant and to Apple Intelligence.

It seems that the path ahead is to focus on its strength: local processing of queries.

Ferret business

In 2023, Apple and researchers from Cornell University released an open-source multimodal LLM called "Ferret." It could use regions of an image in a query, such as identifying what is inside an area drawn on a photograph.

Half a year later, in April 2024, the work expanded into a new version called Ferret-UI, which could understand elements of a user interface. That is, an AI that could read a screenshot of a phone display, identify and read the important elements in view, and potentially interact with the UI of an open app.

A February 2026 paper on "Ferret-UI Lite" describes the natural next step: a version of Ferret that tries to fix a problem with earlier versions. Namely, they relied on large language models (LLMs) that were quite sizable and not designed for on-device processing at all.

Relying on these cloud-based LLMs made sense, because their planning and reasoning capabilities were considerable, which produced strong results. However, it still meant sending data off to cloud servers, when privacy and security advocates would prefer to see that data processed locally.

The team acknowledged progress in both GUI-based and multi-agent systems for the task, particularly in cutting down the work agents need to do to interact with user interfaces. Even so, there was still too much work to carry out locally on a smartphone.

That prompted the creation of the new Lite version of Ferret-UI.

Thin and fast

The result, Ferret-UI Lite, is an end-to-end GUI agent that works across multiple platforms, including mobile, web, and desktop systems. It is also compact enough to run on a smartphone, like an iPhone, without too much trouble.

To accomplish this, Ferret-UI Lite uses 3 billion parameters and was trained on GUI data from both real and synthetic sources. The team also boosted its performance with inference-time chain-of-thought reasoning and visual tool use, along with reinforcement learning.

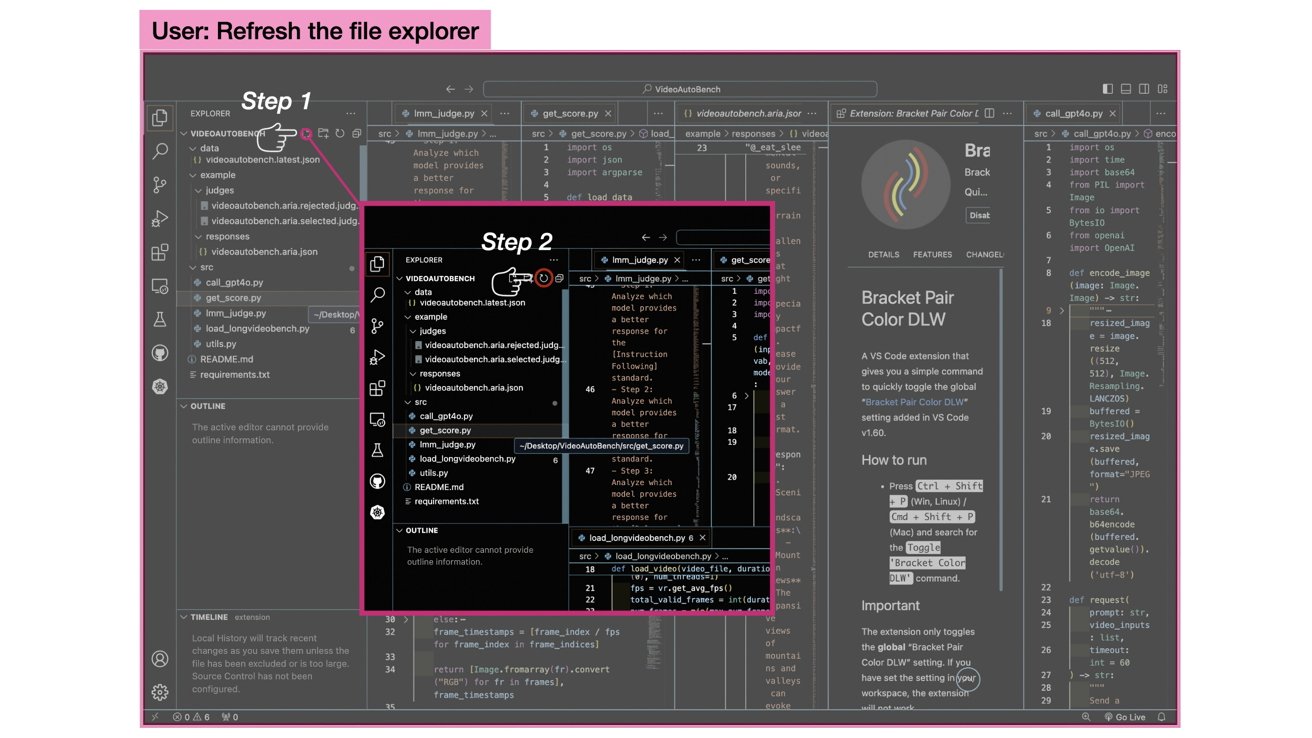

Cropping the screen image based on predictions to minimize how much data has to be analyzed, as shown in the Ferret-UI Lite paper

One example of how Ferret-UI Lite is tuned for locally processed queries is a zoom-in mechanism that helps it analyze the image of the UI. The model produces an initial prediction, then crops the image around the location that prediction points to.

With less of the image to work with, it can focus on the information presented in the cropped region, allowing it to refine its prediction considerably.
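The zoom-in step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Apple's code: the `predict` callable stands in for the model, and `toy_predict` is a hypothetical stand-in that can only "see" the image on a fixed 4x4 grid, so its answers get more precise as the input gets smaller. The names `crop`, `zoom_in_predict`, and `toy_predict` are all invented for illustration.

```python
from typing import Callable, List, Tuple

Image = List[List[int]]   # toy grayscale screenshot, indexed image[y][x]
Point = Tuple[int, int]   # (x, y) in pixel coordinates


def crop(image: Image, center: Point, size: int) -> Tuple[Image, Point]:
    """Cut a size x size window around `center`, clamped to the image bounds.

    Returns the cropped patch and its top-left offset in the full image.
    """
    h, w = len(image), len(image[0])
    size = min(size, w, h)
    x0 = min(max(center[0] - size // 2, 0), w - size)
    y0 = min(max(center[1] - size // 2, 0), h - size)
    patch = [row[x0:x0 + size] for row in image[y0:y0 + size]]
    return patch, (x0, y0)


def zoom_in_predict(image: Image, query: int,
                    predict: Callable[[Image, int], Point],
                    crop_size: int) -> Point:
    """Two-pass grounding: coarse guess, crop around it, refine, map back."""
    coarse = predict(image, query)                  # pass 1: whole screen
    patch, (x0, y0) = crop(image, coarse, crop_size)
    lx, ly = predict(patch, query)                  # pass 2: crop-local coords
    return x0 + lx, y0 + ly                         # back to full-image coords


def toy_predict(image: Image, target_value: int, grid: int = 4) -> Point:
    """Hypothetical stand-in for the model: it finds the target pixel but can
    only report the center of the grid x grid cell containing it, mimicking a
    model with a fixed input resolution. Assumes the image is >= grid pixels."""
    h, w = len(image), len(image[0])
    cell_w, cell_h = w // grid, h // grid
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value == target_value:
                return ((x // cell_w) * cell_w + cell_w // 2,
                        (y // cell_h) * cell_h + cell_h // 2)
    raise ValueError("target not visible in this image")


# Usage: a 32x32 "screen" with one bright UI element at (x=21, y=10).
screen = [[0] * 32 for _ in range(32)]
screen[10][21] = 255

print(toy_predict(screen, 255))                         # (20, 12)
print(zoom_in_predict(screen, 255, toy_predict, 8))     # (21, 11)
```

In this toy setup the first pass is off by one pixel horizontally and two vertically. After cropping to an 8x8 window, each grid cell covers 2 pixels instead of 8, so the refined guess is off by only one pixel.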

According to the researchers, this mimics how people look more closely at something to make out detail.

Promising research

While Ferret-UI Lite isn't massively groundbreaking, its results are still pretty good considering it was pitted against server-level LLM agents. In some cases, the team says, it can outperform larger models.

In the ScreenSpot-Pro GUI grounding benchmark, the model achieves 53.3% accuracy. This is over 15% better than UI-TARS-1.5, a 7-billion-parameter LLM.
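For context, ScreenSpot-style grounding benchmarks typically count a prediction as correct when the model's predicted click point lands inside the target element's bounding box. The sketch below computes accuracy under that assumed rule; the function names and sample boxes are hypothetical, and the exact ScreenSpot-Pro scoring may differ in detail.

```python
from typing import List, Tuple

Point = Tuple[float, float]               # predicted click (x, y)
Box = Tuple[float, float, float, float]   # target element (left, top, right, bottom)


def point_in_box(point: Point, box: Box) -> bool:
    """True if the predicted point falls inside (or on the edge of) the box."""
    x, y = point
    left, top, right, bottom = box
    return left <= x <= right and top <= y <= bottom


def grounding_accuracy(preds: List[Point], targets: List[Box]) -> float:
    """Fraction of predictions that hit their target element."""
    if len(preds) != len(targets):
        raise ValueError("need exactly one prediction per target")
    if not preds:
        return 0.0
    hits = sum(point_in_box(p, b) for p, b in zip(preds, targets))
    return hits / len(preds)


# Hypothetical sample: two of the four clicks land on their targets.
targets = [(10, 10, 50, 30), (100, 200, 140, 220), (0, 0, 20, 20), (300, 40, 360, 80)]
preds = [(30, 20), (150, 210), (5, 5), (310, 90)]
print(grounding_accuracy(preds, targets))  # 0.5
```

Under this rule, a 53.3% score would mean just over half of the predicted clicks landed on the intended element.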

However, not everything is rosy. In a GUI navigation task, Ferret-UI Lite's performance was more limited than that of larger models, though it was still on par with UI-TARS-1.5.

Ultimately, the paper concludes that the experiment "validates the effectiveness of these strategies for small-scale agents," while also pointing out its limitations. Scaling down GUI agents holds both promise and challenges, and the team hopes the research helps inform future work.