Apple just doesn't allow modern Nvidia GPUs on macOS Mojave, and this is a dramatic change from only six months ago. Given that a new Mac Pro is coming that could support Nvidia cards, and that there are already eGPUs that should, it's time that Apple allowed them.

As with anything Apple, there's a long history between the two companies, and some bad blood.

First collaboration

The first Mac to include a graphics processing unit by Nvidia was the Power Macintosh G4 (Digital Audio), which was released in January 2001 and contained an Nvidia GeForce2 MX. Up to then, Apple had been using graphics cards made by ATI, and this change was significant for more than just the switch to Nvidia.

Rather than picking one manufacturer over another, however, Apple was actually choosing to work to the industry-standard OpenGL. Doing so meant that it could freely switch between hardware from ATI, Nvidia or any other company that met those same standards.

So it wasn't that Apple ditched ATI. In fact, there was a 466 MHz model of the Power Mac G4 (Digital Audio) that had a 16MB ATI RAGE 128 Pro graphics card instead.

Still, for the next two years, all Macs shipped with an Nvidia GPU, with the exception of the iMac (Summer 2001), which had an ATI RAGE 128 Ultra. For 2003's Power Macintosh G4 (FireWire 800), Apple used an ATI Radeon 9000 Pro.

Problems

In 2004, the Apple Cinema Display was delayed, reportedly because of Nvidia's inability to produce the required graphics card, the GeForce 6800 Ultra DDL.

Then in October 2008, Apple had to admit that some MacBook Pros had faulty Nvidia processors. Nvidia itself had admitted to problems back in July of that year, though when AppleInsider asked, the company refused to confirm that its chips were causing the MacBook problems.

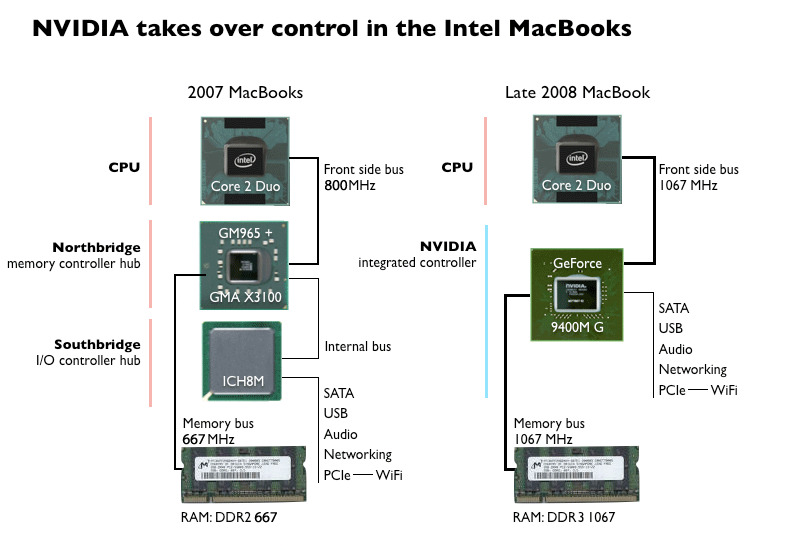

By this point, Nvidia was doing more than straight graphics processing. It was also supplying the chipset that integrated and connected these GPUs to the rest of the MacBook.

This substantially improved the graphics on the MacBooks, and it got Nvidia into a legal battle with Intel. Intel sued, claiming that Nvidia's license did not allow it to make such competing, compatible chipsets. The case and an overlapping countersuit wouldn't be resolved until 2011.

That legal battle may have affected whether Apple could use Nvidia processors in the future, but in 2009 there was also a report that the Cupertino company had dropped them anyway. Nvidia was reportedly accused of "arrogance and bluster" in its proposals, and negotiations with Apple had become extremely bitter.

Around the same time, the iPhone transformed the mobile computing market and meant that phones now needed GPUs. Nvidia had been rumored back in 2006 to be powering Apple's forthcoming product, but that would have meant using the Tegra processor, which didn't ship until 2009.

Instead of Nvidia or AMD — by then the owner of ATI — Apple went for Samsung processors and of course later developed its own.

At the time, Nvidia may also have believed that its patents applied to the GPUs in mobile devices. The company tried to get other firms to buy licenses for this technology, and in 2013 it went as far as filing patent infringement suits against Qualcomm and Samsung.

If Nvidia tried to get Apple to pay its license fees, Apple seemingly said no. In 2016, Apple also said no to putting Nvidia processors in the 15-inch MacBook Pro, going with AMD GPUs instead. Publicly, the reason was performance per watt, but the real reason is anybody's guess.

That performance-per-watt ratio matters most for the GPUs inside laptops, and Nvidia continued to make graphics cards that could be used as eGPUs for Macs. If you had a Mac Pro older than the cylindrical 6,1 model, you could use the company's PCI-E graphics cards internally with the Nvidia-provided web driver. Thunderbolt-equipped Macs could attach one externally with a bit of a fight, and that fight didn't get any easier when Apple explicitly added eGPU support in the spring of 2018.

In 2017, Nvidia didn't deliver drivers during the High Sierra beta, which seems sensible. Instead, it waited to release updated drivers for the shipping version.

And now, in 2019, there aren't any functional Nvidia web drivers for Mojave at all, and it's Apple's fault. The only two Nvidia cards that work with Mojave are the GeForce GTX 680 and the Quadro K5000, both several years old at this point. And this is only a brief sketch of the history between the two companies.

Nvidia cries foul

In October 2018, Nvidia issued as public a statement as it ever does. In a FAQ on Nvidia's developer site, the company said that Apple was to blame for the lack of web drivers for Mojave.

Developers using Macs with NVIDIA graphics cards are reporting that after upgrading from 10.13 to 10.14 (Mojave) they are experiencing rendering regressions and slow performance.

Apple fully controls drivers for Mac OS. Unfortunately, NVIDIA currently cannot release a driver unless it is approved by Apple.

Our hardware works on OS 10.13 which supports up to (and including) Pascal.

We saw this note in October and started asking questions. The "rendering regressions" and "slow performance" happen because there is no real hardware acceleration going on, and even performance on the "supported" cards is iffy at best, having taken a hit in Mojave.

Inside Apple

What we found was support inside the Spaceship for the idea, but a lack of will to allow Nvidia GPUs. We've spoken with several dozen developers inside Apple, obviously not authorized to speak on behalf of the company, who feel that support for Nvidia's higher-end cards would be welcome, but that it is being quietly disallowed at higher levels of the company.

"It's not like we have any real work to do on it, Nvidia has great engineers," said one developer in a sentiment echoed by nearly all of the Apple staff we spoke with. "It's not like Metal 2 can't be moved to Nvidia with great performance. Somebody just doesn't want it there."

One developer went so far as to call it "quiet hostility" between long-time Apple managers and Nvidia.

Clearly, somebody in Apple's upper echelons doesn't want Nvidia support going forward. But even off the record, nobody seemed to have any idea who. The impression we got is that the policy is some kind of passed-down knowledge whose origin has been lost to the mists of time, or an unwritten rule like so many in baseball.

Two years ago, before Apple supported eGPUs, this block may have made at least a modicum of sense. Any Macs with PCI-E slots were aging, and the user base was dwindling through attrition alone. But the drivers are available for High Sierra and are still being updated to this day, and we can testify that they still work great in a 5,1 Mac Pro, including with the 1000-series cards.

The Nvidia driver can be shoehorned onto High Sierra machines by users who want an Nvidia card in an eGPU. We're not going to delve into it here, but there is a wealth of information over at eGPU.io if you're so inclined. And if you do so, don't upgrade to Mojave.

This decision makes absolutely no sense now that eGPUs are explicitly supported in macOS. Nvidia cards work fine in Windows, so it's not a technical limitation. Some tasks perform better on AMD and some on Nvidia; it is a fact of silicon. There is no reason beyond marketing and user-funneling to prohibit use of the cards at the software level.

No, there aren't a ton of eGPU installs. Yes, a good portion of those users are fine with AMD cards. But it is absolutely user-hostile to block Nvidia from releasing drivers, not just for future eGPU use, but for the non-zero percentage of users who are keeping the old Mac Pro alive. And if some ancient Apple secret or preserved grudge is what's preventing it, that's even worse.

And, it makes us worry what "modular" means for the forthcoming Mac Pro.