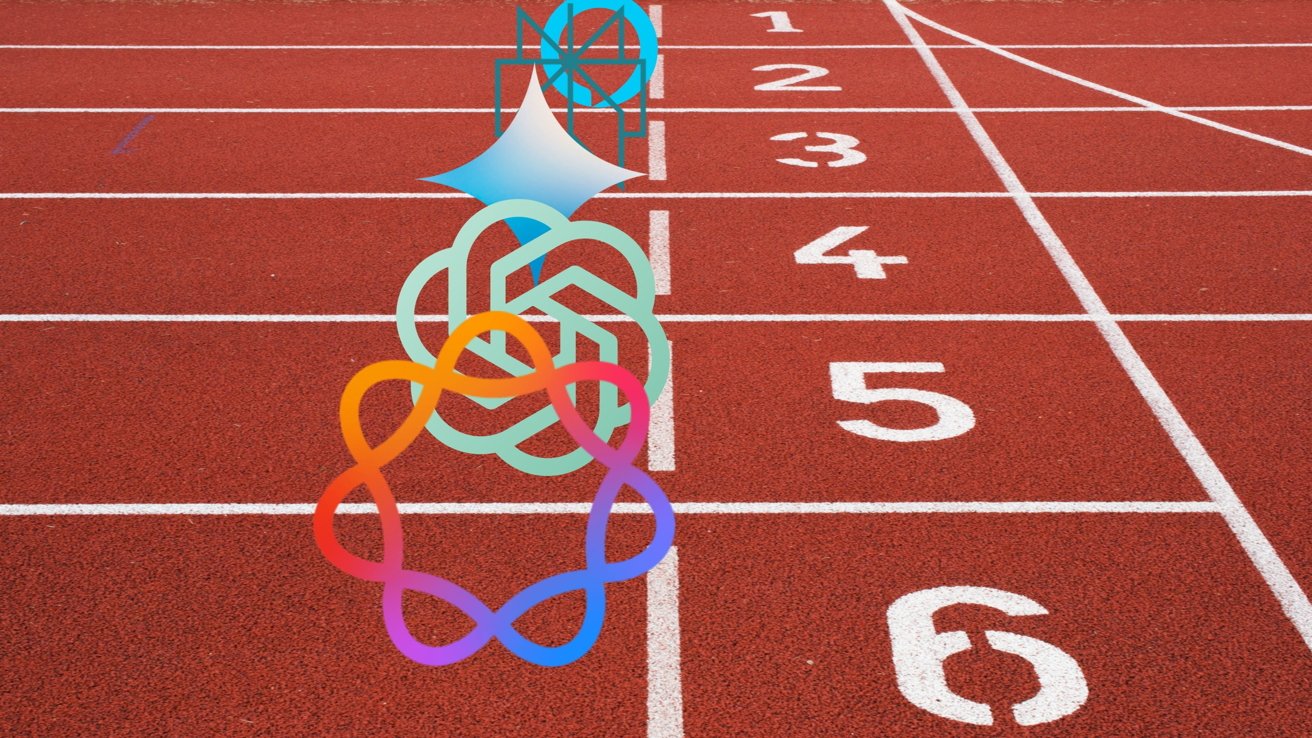

A group that includes Apple, Google, and others has sent a letter to the US Department of Defense concerning Anthropic's supply-chain risk designation, clearly concerned about how that might affect future tech contracts.

Anthropic took a moral stand against the United States government's request for unrestricted access to its AI tools. The Trump administration retaliated by ordering all government entities to stop using Claude and by designating the company a supply-chain risk.

The designation is usually reserved for foreign entities that pose a threat to United States infrastructure.

The Information Technology Industry Council (ITIC), whose members include Apple, Microsoft, Google, Nvidia, and others, sent a letter to the Department of Defense about the supply-chain risk designation. According to Reuters, which reported on the letter's contents, the letter did not mention Anthropic directly but instead raised concerns about the wider implications of the designation.

"We are concerned by recent reports regarding the Department of War's consideration of imposing a supply chain risk designation in response to a procurement dispute," the letter said.

Many ITIC member companies are building AI tools and other technologies that can be, and are expected to be, licensed by government entities. If the supply-chain risk designation can be used to punish a company for refusing government demands, it could create problems for all of them.

Government employees have reportedly said that purging Anthropic from every government entity, including offices, schools, and military installations, is a challenge. If such a designation were handed down too thoughtlessly to any company that refuses a request, it could prove catastrophic for global supply chains and for the tech companies involved.

Anthropic's Claude is also heavily used by tech companies like Apple. If an ITIC member that relies on Anthropic technology bids for a government contract, that reliance could become a problem.

The Department of Defense didn't say how it would respond, only that it would.

The government has options

OpenAI, which partners with Apple to provide direct access to ChatGPT through Siri, jumped at the opportunity to fill the void left by Anthropic. Mere hours passed between OpenAI's pledge to bring ChatGPT to the Department of Defense and the United States' bombing of Iran, which the President has characterized as a war.

It isn't clear how the US government might deploy OpenAI's ChatGPT. Anthropic's moral and ethical objection was to the government's request to use its AI for autonomous weapons and domestic mass surveillance.

Apple's reliance on OpenAI faded when Apple announced that Google Gemini would be used to train Apple Foundation Models. The older ChatGPT integration remains in various places across Apple's ecosystem and is still accessible.

There is no word on whether Apple plans to remove those integrations or keep them alongside the enhanced Siri and Apple Intelligence arriving later in 2026.

To complicate matters, OpenAI is a member of the ITIC. The letter raises concerns about the government's actions even as one of the group's own members moves to fill the gap those actions created.

Things may become quite difficult to navigate as the US government decides who to punish, who to support, and how to respond.

The Department of Defense does have one option that it is already working to integrate: Elon Musk's Grok. Grok's developer, xAI, is not a member of the ITIC.