Despite recent headlines screaming that Apple is behind or caving, Siri won't be "powered" by Gemini, ChatGPT, or anything other than the safe and secure Apple Foundation Models. Here's what's actually happening behind the inflammatory headlines.

It might be tempting, especially if you read the headlines, to assume Apple has lost its way, chased off all of its talent, and been left scrambling for AI solutions. If you've been following AppleInsider, though, you're better prepared for what is actually happening at Apple and what is coming in the spring with the Siri LLM relaunch.

The tech world and its investors went wild when artificial intelligence entered the scene. We were promised revolutionary technology previously only dreamed of in sci-fi: stuff capable of curing cancer or ending human civilization as we know it.

In the years since, we've gotten a few useful tools, some productivity gains in select cases, and a puffed-up corner of the global economy, inflated at the expense of other sectors by a series of increasingly complex lies told by tech companies. People profit from bad news about Apple, good news for its competitors, and the company's supposed struggle to stay relevant in the global AI grift.

The narrative is one we've heard before, and one AppleInsider has written about many times: because of Apple's late entry into a saturated market and its unwillingness to develop in public, the company is failing, scrambling, and unable to do anything on its own.

It wasn't true before, and it isn't true now.

Let's discuss, yet again, what Apple's plans for Apple Intelligence actually are.

An LLM-based Siri

Apple's approach to artificial intelligence seems to have a few tiers to it. First, the foundation will be the Apple Foundation Models, which will also power the new LLM-backed Siri.

Some might think Apple is about to make that LLM Google Gemini, but that isn't the case. The LLM powering Siri will be Apple's, and there is zero evidence suggesting otherwise.

Today, Siri works as it always has. It processes a request using decision trees to arrive at an end result or action.

It's a classic machine learning approach, with both advantages and disadvantages. Because its inputs and outputs are finite, this kind of system can't hallucinate, but that also means commands have to be phrased precisely and every function has to be purpose-built.

The LLM-based Siri will be able to parse a wider set of commands to arrive at the same function or result. Users won't need to carefully construct their commands for Siri to understand them.

However, Siri likely won't be a traditional chatbot, even if it retains a recent history of requests and a broader understanding of the user, much like the memory ongoing ChatGPT chats can have.

So, right off the top, using Siri should be more natural and simple for nearly every command. Apple will likely use specialized agents built with the Apple Foundation Models to streamline commands for things like playing music or controlling the home.

As others have noted about Alexa or Gemini, having a conversation to get a light to turn off is downright frustrating.

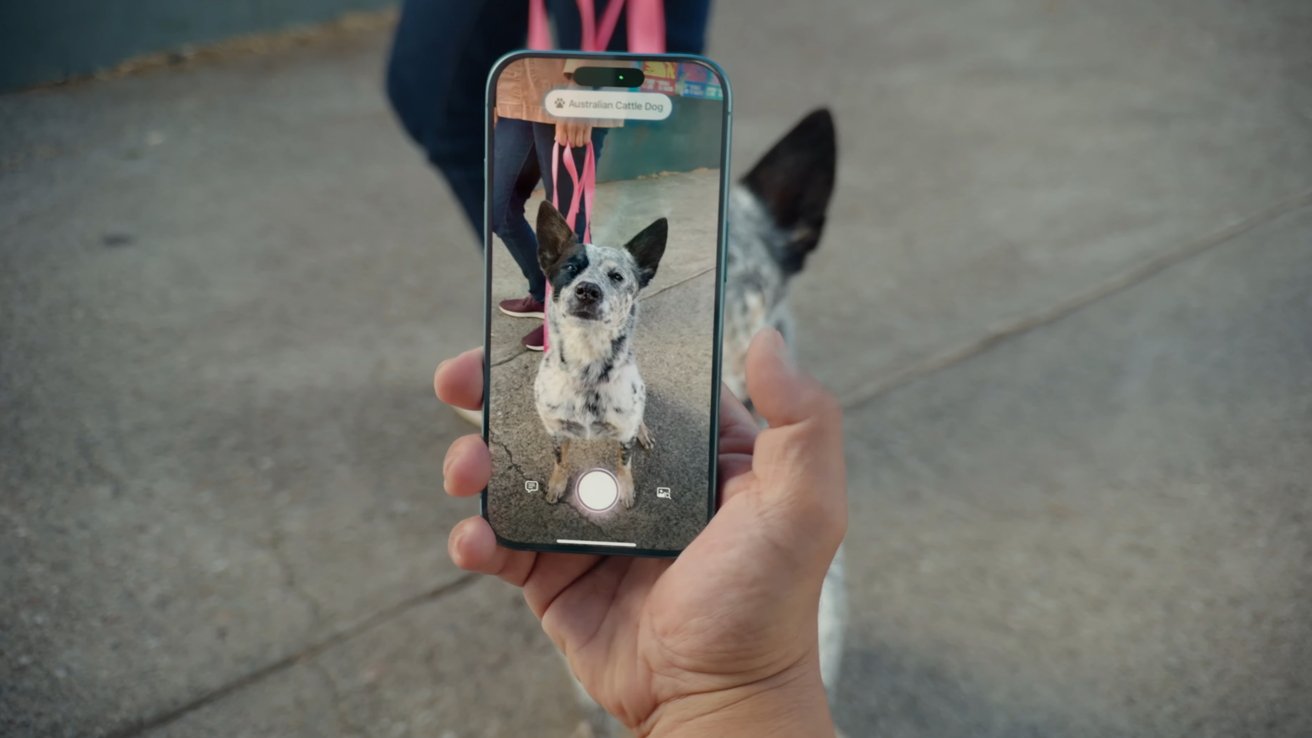

The superpower that the LLM-based Siri will have, and the true game changer, is access to a revitalized app intent system. App intents have existed for a long time in iOS, but developers can now basically draw a map to every function within their apps for Siri to follow.

In theory, if it exists on your iPhone, Siri will be able to use app intents to access that information, toggle a control, or perform a complex task with a single phrase. Users will even be able to build and save entire Shortcuts with a phrase.

Apple is also building in support for Model Context Protocol (MCP), which will let external systems tap into app intents. Apple will presumably ensure data privacy is maintained no matter how these systems are accessed and used.

Apple will realize the fully voice-controlled computer in a way no competitor has been able to. Its full control over its hardware and software is what will make this system work.

Third-party models in Private Cloud Compute

On to third-party integrations. For whatever reason, analysts have been taking issue with Apple's desire to work with third-party AI companies on integrations.

The same people who cry for interoperability and openness are calling Apple's attempts to bring industry-leading AI to its platform a band-aid. Even though they say the words, they don't seem to understand what bringing third-party models into the mix actually means.

While we don't have all the details yet, it appears that Apple has approached several companies, including Anthropic, Google, and OpenAI, about using their models.

Nothing has been confirmed, but a report suggesting Apple is ready to spend $1 billion a year on a Google Gemini model is enough to make people think Apple has picked a single winner. What seems more likely is that Apple is looking to bring multiple models into Private Cloud Compute servers for users and Siri to access.

In practice, a user could make a query via Siri, and if it needs what Apple calls "world knowledge" from the web, Siri could pass that query to Gemini. However, it wouldn't be the Gemini sitting on Google's servers, waiting to train on every drop of user data.

Instead, a version of Gemini would run on Apple's Private Cloud Compute servers. The user's query and any included data would be passed to the model, used to generate a response, then discarded.

It would mean users could access the world's most popular AI models, regardless of which company made them, via Apple's servers. Data privacy would be preserved, and as a bonus, every AI query handled by Apple Intelligence, Siri, and the third-party models in Private Cloud Compute would be powered by renewable energy.

An unfortunate delay

Apple pressured itself to roll out Apple Intelligence before it wanted to; that much is clear. Many features, like Photos Clean Up, were rebranded as AI when they were clearly an evolution of previous ML implementations.

Writing Tools, Summaries, and Image Playground were slim pickings at best. Of these, Writing Tools was the only one to do anything particularly notable for users.

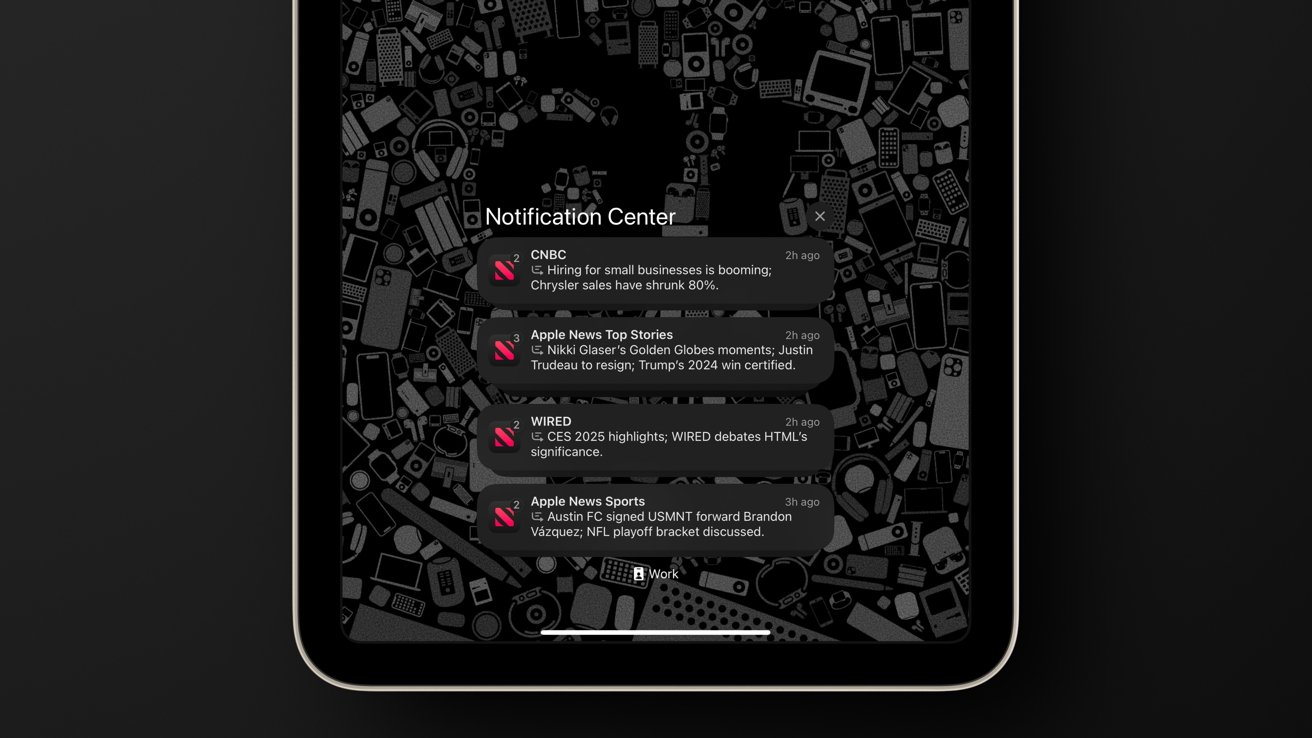

Personally, I saved money by using the Proofread function in Writing Tools instead of Grammarly. That, along with Notification Summaries and email and Messages summaries, helped me save time and ease triage.

These aren't big and flashy features. No one is losing a job over Apple Intelligence, which is why investors panned the initial launch.

Apple's promised integration of app intents with Siri via Apple Intelligence didn't arrive in early 2025. The feature was delayed because Apple wasn't happy with its hallucination rate or its hybrid implementation.

So, instead of releasing non-functional software trash to tick the "it has AI" checkbox, as so many other companies have done, Apple punted. While the company has been silent on what is coming in early 2026, it is coming, on time, and what Apple has built is something only Apple could.

Brain drain only in name

Of course, since Apple missed the initial launch of a new, more powerful Siri, the headlines declared that Apple was behind. The world was ready to move beyond Apple because of its lack of compelling AI, or at least, that was the narrative going around.

The iPhone 16 and iPhone 17 sold well, either in spite of or because of Apple's lack of emphasis on AI. Even so, analysts still call the current state of Apple Intelligence a weakness, without much evidence to back that up.

Meta doubled down on the lie after giving up on its namesake, the Metaverse. It was determined to spend billions to be first to the still-fictitious "superintelligence."

Billions of dollars later, with a dozen employees poached from Apple itself, Meta is bleeding money with no evidence of progress. Investors are losing patience, especially as Zuckerberg haphazardly drains the coffers for a fantasy.

Google's Gemini has had some impressive demos, but as with Alexa's poor AI launch, the reality is almost always a lackluster, overly chatty bot that won't turn your lights off. In specific instances, when used as a proper tool, these AI products perform some killer functions, but they're still not the paradigm-shifting AI the grifting CEOs promised.

Yet, even as these companies shovel more and more slop into the world, people look to Apple and ask when its slop machine will arrive. They ignore innovations in design, chip architecture, battery technology, and camera performance to call Apple boring and behind.

Without a chatbot that consumers could date, or ask inane questions once answered by human writers via a search engine, Apple was "behind." CEOs promised AI would vibe-code apps out of thin air, and even though that doesn't exist, Apple is somehow behind for not doing it already.

Even so, it is clear what Apple is working on and what's coming in 2026, and it is interesting.

An ethical AI ecosystem

Apple is expected to announce all of these initiatives across 2026, if not all at once in the spring. If we're reading the tea leaves correctly and this is how it is all implemented, Apple will have an incredible AI ecosystem on its hands.

The conversation will shift away from what launched when and who's ahead. Instead, it'll be about who is providing a useful service, and that's when Apple will step in with a safe, secure, and green AI ecosystem.

The AI bubble will pop, and when it does, a lot of the smaller entities will disappear. It has always been thus. Meanwhile, the industry's lack of safeguards, and AI companies' complaints that paying content creators is too hard, are only now starting to hit judicial roadblocks. AI money isn't eternal.

Apple's money effectively is, though. It can wait.

The core technologies are quite useful and will endure. Apple will have an incredible offering that will be very difficult to match.

There is no undoing the damage done by scraping every piece of content from the web to develop artificial intelligence. But if AI must exist, it can at least operate in a way that is private, secure, and powered by renewable energy, for Apple users anyway.