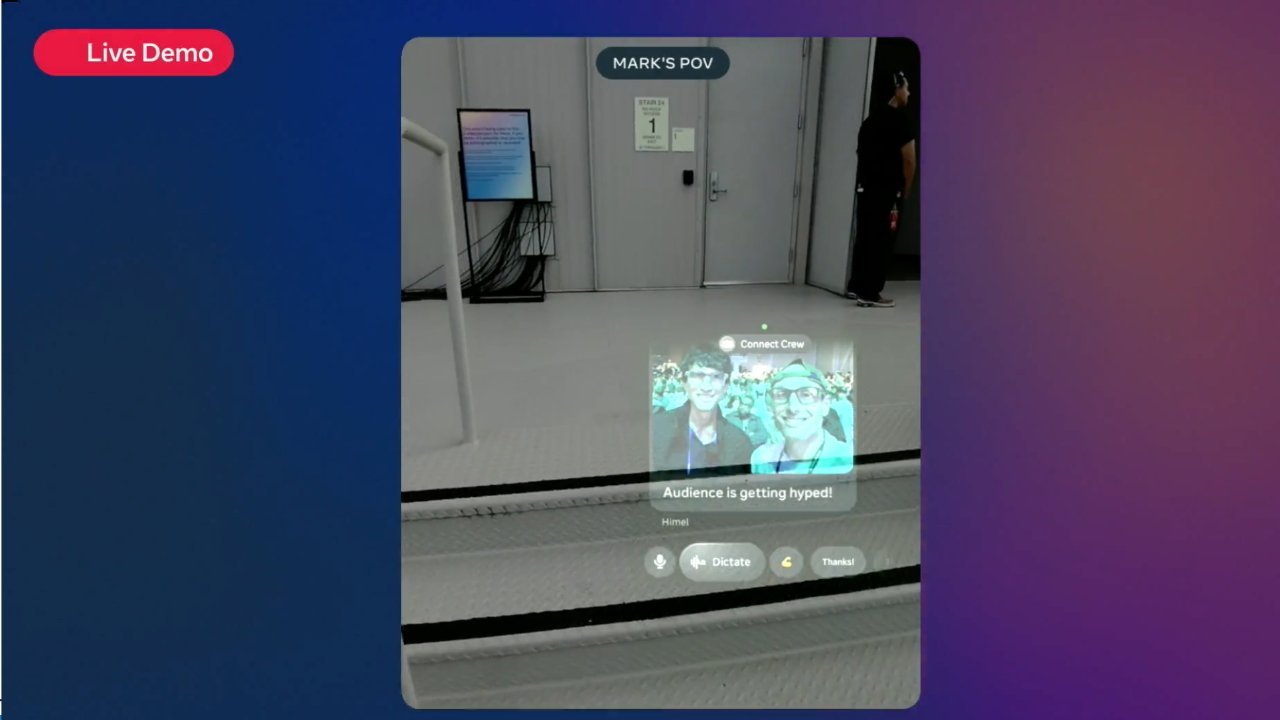

Meta's Ray-Ban smart glasses are a privacy nightmare, with footage of naked people, sensitive information, and violent acts captured and seen by Meta's AI and an army of employees.

Smart glasses are a trendy gadget that relies on cameras to feed AI models and answer queries. While many people view this as a fun feature and a hands-free way to film their lives, it remains a massive privacy issue if not used correctly.

An investigation by Svenska Dagbladet on February 27 examined the workings of the Meta and Ray-Ban partnership and its AI glasses. It found that footage captured by the glasses is collected and viewed by many people, including humans who help train AI models.

Anonymous sources at a company in Nairobi, Kenya, revealed that they have seen footage of people at their most private and vulnerable, at moments when the users of Meta's smart glasses would rather not have had the cameras rolling.

Moderation reality

Sama is a Meta subcontractor in Kenya that supplies workers who identify objects in images and video to train Meta's AI. Thousands of people draw boxes on screens to label items, and those labels are then fed into the AI system to improve its models.

One worker told the newspaper that he had seen people going to the bathroom or undressing. However, he admitted that he didn't know whether the users were aware they were being recorded, and said they probably wouldn't want the footage seen by anyone else.

Other employees also spoke to the newspaper about what they had seen. One described footage of a man placing the glasses on a bedside cabinet and leaving the room, only for a woman to come in and change her clothes.

The videos shown to the data annotators went further still, including credit card details and sexual acts.

While some of this footage could well have been filmed deliberately, many clips are thought to have been captured without the user or the people in the video being fully aware, all in sensitive situations that would be compromising if leaked.

"Privacy"

Using humans to label data for AI training is nothing new in the industry. Companies rely on teams of workers to sort through data and correct accuracy issues, often in countries where labor is cheap enough to hire small armies of them.

Even so, Meta and others assure the public that their systems are private. At minimum, workers handling the supplied footage must sign a non-disclosure agreement before they can work on it.

In fact, the sensitive data these workers see isn't supposed to be used to train the AI models at all.

For the people appearing in the videos, anonymization systems are in place to obscure faces, but they are not entirely reliable. One former Meta employee explained that faces are sometimes still visible, with difficult lighting conditions partly to blame.

Reporters also asked Meta how long voice recordings and video clips are stored, among other privacy questions. The company took two months to respond, and its answer only explained how data is transferred from the glasses to the mobile app before referring reporters to Meta's AI privacy policy.

A repeating situation

Unsurprisingly, this is far from the first report to delve into the human side of AI. Apple has dealt with a similar scandal before.

Back in 2019, Apple faced widespread criticism after it emerged that audio recordings captured when Siri was triggered were sent to third-party contractors. Those contractors were working to improve the accuracy of Siri itself, and handled only audio.

At the time, those recordings included private conversations between doctors and patients, as well as drug deals, among other things.

It's a headache Apple has dealt with for years, including paying millions of dollars in settlements as recently as 2025.

With Apple reportedly working on a wearable AI pin, AirPods with infrared cameras, and its own Apple Glass, it too may find itself dealing with similar complaints. That is, unless it has learned its lessons from Siri.

At the very least, Apple insists that it handles such data sensitively.