A recurring rumor says Apple's smart glasses will rely on gesture-based input, with the device featuring two built-in cameras, Siri, and not much else.

Claims of Apple working on smart glasses date back to 2015, with analysts predicting a 2026 or 2027 release even before the Apple Vision Pro made its debut at WWDC 2023. Since then, we've continued to see new rumors about smart glasses development efforts, hardware, and features.

A MacRumors report has effectively reiterated earlier hardware rumors, while also suggesting the device will support hand gestures. The gesture claim aligns with what analyst Ming-Chi Kuo said in June 2025.

The report suggests Apple's smart glasses will feature two cameras: a high-resolution camera for photos and videos, and a low-resolution camera for gestures and visual input tied to Siri.

Neither 3D cameras nor a LiDAR sensor will be available on these smart glasses, allegedly because the hardware is too energy-intensive for the small on-board battery Apple reportedly plans to use to keep the glasses thin and light.

It's even been said that Tim Cook regards the product as a top priority, as part of a broader push towards AI-enabled wearables and Visual Intelligence.

An April 2026 report suggested that Apple's AI-powered smart glasses would be able to take photos and videos, and that they would offer access to Siri. Rumors from October 2025 and February 2026 suggested the initial version of the device wouldn't feature a screen.

As for how users will interact with the product, gestures are the obvious choice for multiple reasons. Rumors about the device, along with Apple patents and patent applications filed over the years, all point in that direction.

Why gesture support is obvious and necessary for Apple smart glasses

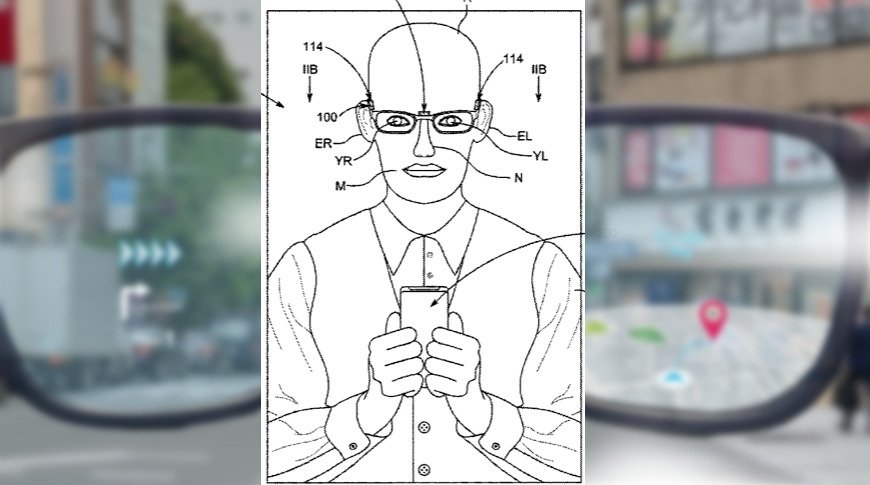

Given that the idea of using gestures to control smart glasses appeared in an Apple patent from 2020, it's not hard to imagine this approach becoming a reality, even without an "insider source."

Hand gestures, in particular, are the subject of a 2024 Apple patent application. It explains how users might be able to shop or get information about an object or landmark, simply by pointing at something in front of them.

The operating system of the Apple Vision Pro, known as visionOS, already relies on gestures for navigation. An Apple patent from 2024 suggested these navigation gestures might expand to other devices in the company's product lineup.

More recently, a 2025 Apple patent explored, among other things, how gestures might be used to control the long-rumored camera-equipped AirPods.

That said, Apple has been researching motion tracking since at least 2009, so references to gesture-operated devices may be found in even more patents and patent applications.

Given that Apple already has products that rely on gestures, that gesture-focused navigation has appeared in multiple patents and applications, and that the smart glasses will ship with cameras, there's only one conclusion to be drawn.

Apple's smart glasses will rely on gestures; that much is obvious, even without multiple rumors saying so outright. What's less clear, however, is when the product will reach end users.

It's been said that Apple is targeting a late 2026 release for its smart glasses, possibly around Christmas. However, a 2027 release isn't out of the question, either.

Apple will likely tout its new product as an AI-focused iPhone companion, so it might appeal to some users who are already part of the Apple ecosystem. Still, not everyone is a fan of smart glasses, regardless of manufacturer.